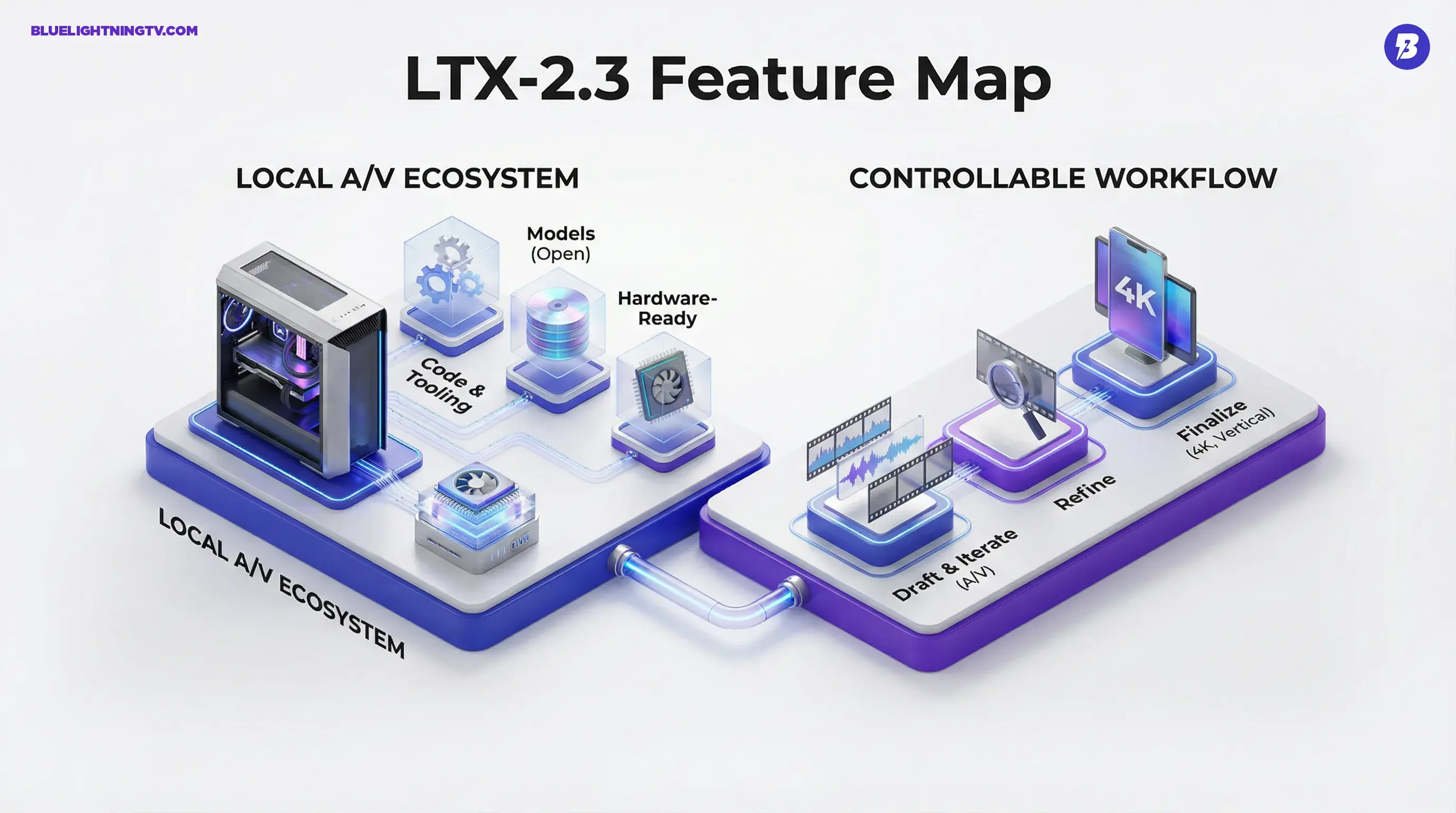

Lightricks just open-sourced LTX-2.3 through its LTX-2 repository on GitHub, pushing one of the most practical versions of “AI video on your own machine” we have seen in a while. This is not another cloud demo that makes you wait in a queue for a silent eight-second clip. LTX-2.3 is positioned as a production-ready, local-first audio-plus-video model with a real ecosystem behind it: code, tooling, and model checkpoints you can actually work with.

That combination matters because creators do not just need a cooler render. They need repeatable output, tight iteration loops, and fewer points where the workflow collapses into “okay, now export and rebuild everything in three other apps.” LTX-2.3’s big swing is treating sound as a first-class citizen while keeping the release open enough to fit into how people already produce.

What shipped this time

LTX-2.3 is the latest iteration of Lightricks’ LTX-2 line: a generative model designed to output video with native, synchronized audio. Lightricks is leaning hard into “complete media draft,” not “moving pixels that still need a full audio pass.” The official LTX-2 site frames it as open-source, local audio-video generation with up-to-4K output and performance modes tuned for creator hardware (LTX-2 site).

Two notable distribution points for anyone who wants to kick the tires:

- Model checkpoints: Hugging Face (Lightricks/LTX-2.3)

- Tooling and pipelines: the GitHub repo includes inference code and training utilities, including a LoRA trainer (GitHub (LTX-2))
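For anyone who wants to go from reading to downloading, here is a minimal sketch of pulling the checkpoints with the `huggingface_hub` Python client. It assumes that package is installed and that the repository ID matches the Hugging Face listing above; the function names, the `revision` value, and the target directory are illustrative placeholders, not names taken from the release.

```python
from pathlib import Path


def build_download_kwargs(repo_id: str, revision: str, target_dir: str) -> dict:
    """Collect the arguments for huggingface_hub.snapshot_download.

    Passing an explicit revision (a branch, tag, or commit hash) keeps
    repeated downloads pointed at the same set of files.
    """
    return {
        "repo_id": repo_id,
        "revision": revision,
        "local_dir": str(Path(target_dir)),
    }


def fetch_checkpoints(repo_id: str, revision: str, target_dir: str) -> str:
    """Download the model repository and return the local folder path."""
    # Imported lazily so build_download_kwargs works without the dependency.
    from huggingface_hub import snapshot_download

    return snapshot_download(**build_download_kwargs(repo_id, revision, target_dir))
```

Calling `fetch_checkpoints("Lightricks/LTX-2.3", "main", "models/ltx-2.3")` would download the default branch into a local folder. Swapping `"main"` for a specific commit hash is the stricter option if you need the files to stay identical between runs.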

The creative unlock is not that it generates audio. It is that the audio is generated as part of the same artifact: your first draft plays like a scene, not a silent concept clip you have to finish elsewhere.

Why local changes incentives

“Runs locally” can sound like a nerd flex until you have sat through the realities of cloud video: rate limits, queue times, pricing that punishes iteration, and the always-present question of what can (and cannot) leave your machine.

LTX-2.3 flips the incentives in a few creator-relevant ways:

- Iteration gets cheaper: when renders are not metered per attempt, creators experiment more. That is basic survival math for social teams and agencies.

- Privacy gets simpler: keeping drafts and source material on-device can reduce the “can we even use this tool?” friction for client work.

- Workflows get versionable: open tooling makes it easier to lock a pipeline to a specific model version instead of waking up to “the model changed and now the brand face looks different.”

None of that automatically makes outputs better than the best closed systems. But it does make the process more controllable, which is usually what pros actually mean when they say “production-ready.”
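One way to make “versionable” concrete is to record a hash for every file in your local model folder and check it before each batch of renders. The sketch below uses only the Python standard library; the function names and the lockfile format are hypothetical conventions for illustration, not something the LTX-2 tooling provides.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large checkpoints are never loaded whole."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_lockfile(model_dir: Path, lockfile: Path) -> dict:
    """Record a SHA-256 hash for every file under model_dir.

    Keep the lockfile outside model_dir so it does not hash itself.
    """
    hashes = {
        p.relative_to(model_dir).as_posix(): sha256_of(p)
        for p in sorted(model_dir.rglob("*"))
        if p.is_file()
    }
    lockfile.write_text(json.dumps(hashes, indent=2))
    return hashes


def verify_lockfile(model_dir: Path, lockfile: Path) -> list:
    """Return the relative paths that are missing or no longer match."""
    expected = json.loads(lockfile.read_text())
    changed = []
    for rel_path, expected_hash in expected.items():
        candidate = model_dir / rel_path
        if not candidate.is_file() or sha256_of(candidate) != expected_hash:
            changed.append(rel_path)
    return changed
```

An empty list from `verify_lockfile` means the weights on disk are the same ones the look was approved against. Anything else is a signal to stop before rendering client work.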

What creators can expect

Lightricks is marketing LTX-2.3 around high resolution, speed, and audiovisual generation. The creator-facing headline features being discussed around the release include up to 4K output, high frame rates (up to 50 fps), an updated autoencoder (VAE) pipeline for sharper detail, and native vertical (9:16) support rather than “crop it later” workarounds. Third-party coverage has called out sharper textures and native vertical support as key improvements (VP-Land overview).

Instead of treating this as a spec war, here is the more useful framing: LTX-2.3 is trying to make the unit of output closer to something you can cut into an edit.

| What you get | What it replaces | Why it matters |
|---|---|---|
| Audio + video together | Silent generations + separate sound pass | Drafts communicate intent faster |
| Local inference | Queues, credits, throttles | More iterations, fewer blockers |
| Open tooling | Locked web UI workflows | Integrates with real pipelines |

What is actually new

If you have been tracking LTX-2 since the earlier open release, LTX-2.3 reads less like “new category” and more like “tighten the bolts where creators feel pain.” Early breakdowns emphasize:

- Sharper detail retention: especially on faces, textures, and small objects where earlier generations could go soft.

- Better prompt adherence: fewer “cool, but not what I asked for” moments.

- Vertical-native workflows: because yes, we live in a 9:16 world.

- Performance tuning: faster variants aimed at fewer steps and lower VRAM pressure so local use feels plausible.

There is also a wider ecosystem play: Lightricks is not just dropping a checkpoint and walking away. The repo is structured like something meant to be built on: pipelines, trainers, and integration points. That is the difference between “open” as a vibe and open as a workflow.

COEY context: this fits a bigger shift

If you want the earlier COEY take on why synced audio changes the whole “first draft” equation for local AI video, see “LTX-2 Brings Local AI Video With Synced Audio.”

Licensing: read the fine print

Here is the pragmatic moment that creators and small studios should not skip: “open-source” can mean different things for code versus weights.

In LTX-2.3’s case, the code is released under Apache 2.0 on GitHub. The weights on Hugging Face are governed by the license terms published on the model card. Lightricks also summarized its “open weights” positioning in its announcement materials (GlobeNewswire release).

Translation: for many indie creators and small teams, this will likely feel effectively open. For bigger shops, it is still promising, but the weights license turns adoption into a procurement conversation, not just a git clone.

Where this lands in production

LTX-2.3 does not replace an editor, a sound designer, and a motion team. But it does change what the “first draft” can be, and that shifts timelines.

Concepts that actually play

When audio is included, a concept clip can communicate pacing and emotional tone faster. That is huge for ad concepts, pitch boards, and social spots where timing is the message.

Variant-heavy social cycles

Short-form teams do not need one perfect take. They need ten good ones by lunch, plus alternates for localization, hooks, and different CTAs. Local inference makes that kind of volume less painful.
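As a rough illustration of what “ten good ones by lunch” looks like as a batch, the sketch below expands one concept into every hook, CTA, and seed combination. The `RenderJob` structure and `build_variants` helper are hypothetical. How each job is handed to a renderer is left out because this article does not cover the LTX-2 pipeline API.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class RenderJob:
    prompt: str  # full text prompt for one variant
    seed: int    # fixed seed so a good take can be re-rendered
    label: str   # stable name for output files and review notes


def build_variants(base_prompt, hooks, ctas, seeds):
    """Expand one concept into every hook x CTA x seed combination."""
    jobs = []
    for (h_idx, hook), (c_idx, cta), seed in itertools.product(
        enumerate(hooks), enumerate(ctas), seeds
    ):
        jobs.append(
            RenderJob(
                prompt=f"{hook} {base_prompt} {cta}",
                seed=seed,
                label=f"hook{h_idx}_cta{c_idx}_seed{seed}",
            )
        )
    return jobs
```

Two hooks, two CTAs, and three seeds yield twelve labeled jobs. Because each label encodes its inputs, a reviewer can ask for “hook1, cta0, but try two more seeds” without anyone reconstructing the prompt by hand.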

Private client workflows

For teams that simply cannot put assets into a hosted tool, local execution plus available weights creates an on-ramp to generative video without re-architecting compliance.

Limits worth respecting

This is the part where we keep it real. LTX-2.3 is a meaningful release, but creators should walk in with expectations that match how generative video works today.

- Short clips remain the unit: most high-quality gen video is still best treated as shots you stitch, not endless scenes you prompt once.

- Audio is the hardest part: “synchronized audio” does not automatically mean clean dialogue or broadcast-ready sound design. It means your draft has audio generated alongside the visuals.

- Local means you own the setup: no queues, but also no magical IT fairy. Drivers, VRAM, and dependencies are yours to manage.
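On the “you own the setup” point, a small preflight script can catch the most common blocker before a long render starts. This sketch assumes an NVIDIA GPU with the `nvidia-smi` command-line tool on the PATH; other hardware would need a different check. The helper names are hypothetical, and the VRAM threshold is something you supply yourself, since this article does not state the model's requirements.

```python
import shutil
import subprocess


def parse_gpu_report(report: str) -> list:
    """Turn 'name, memory_mib' CSV lines into (name, memory_mib) tuples."""
    gpus = []
    for line in report.strip().splitlines():
        if not line.strip():
            continue
        # Split on the last comma so commas in a GPU name are preserved.
        name, _, memory = line.rpartition(",")
        gpus.append((name.strip(), int(memory.strip())))
    return gpus


def query_gpus() -> list:
    """Return detected NVIDIA GPUs, or an empty list if nvidia-smi is missing."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,  # raises if the driver errors out
    )
    return parse_gpu_report(result.stdout)


def has_enough_vram(gpus: list, required_mib: int) -> bool:
    """True if at least one GPU reports required_mib or more of total memory."""
    return any(memory >= required_mib for _, memory in gpus)
```

Running `has_enough_vram(query_gpus(), required_mib)` at the top of a batch script turns a cryptic out-of-memory crash twenty minutes in into a clear message up front.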

The bigger signal is what this normalizes: open, local, audiovisual generation as a baseline expectation. If LTX-2.3 holds up across real creator workloads, it will push the whole category toward tools that behave less like slot machines and more like production systems.