OpenAI just expanded the GPT 5.4 lineup with two smaller variants, GPT 5.4 Mini and GPT 5.4 Nano, aimed at the work most creator teams actually do: high volume drafts, quick rewrites, routing, tagging, and a thousand tiny make this usable steps that eat your day.

If you want the cleanest source of truth for what is live right now, start with OpenAI’s pricing page: OpenAI API pricing.

This is not a new frontier model moment. Its a throughput moment, the same energy as seeing your favorite editing app finally add batch tools. Not glamorous, extremely impactful.

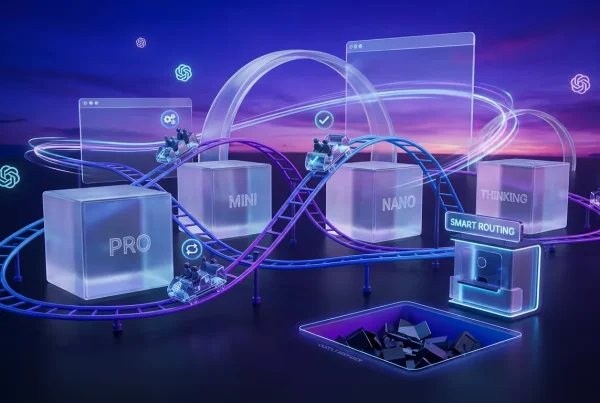

The real news: OpenAI is pushing model choice down into the workflow layer, so which model you run becomes as normal as which template or which export preset.

What actually shipped

OpenAI’s framing is straightforward: Mini is the daily driver that stays capable while cutting latency and cost; Nano is the ultra light option meant to live inside pipelines where you need instant answers and you are allergic to unnecessary spend.

If you want the broader context on GPT 5.4’s release and how OpenAI is positioning it for long context and agent workflows, see our earlier breakdown: GPT-5.4: 1M Context and Real Agent Workflows.

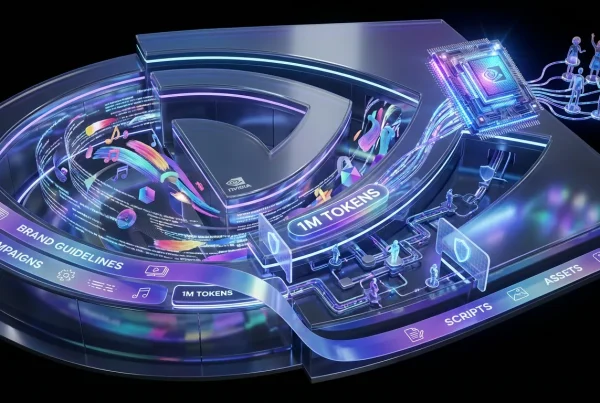

These smaller variants matter because most teams should not be paying flagship prices to do flagship thought tasks. A huge percentage of AI work in creative operations is repetitive:

- turning briefs into structured outlines

- generating 30 variations of a hook

- formatting copy into channel specs

- extracting product attributes from messy text

- checking style rules and banned phrases

- routing tasks based on content type

That is the lane Mini and Nano are designed to own.

Mini: the new default

GPT 5.4 Mini is positioned as the model you can keep running all day without the do not look at the token bill anxiety. It is meant for real writing work at scale, not just classification and not just toy prompts.

What Mini is optimized for

- Fast iteration loops: more give me 12 options cycles per hour

- Brand safe drafting: enough capability to follow voice rules and formatting constraints

- Batch workflows: ideal for generating sets where consistency matters

- Tool use: designed to work inside tool using workflows like structured outputs and multi step tasks

If your team uses AI like a creative assembly line, draft to refine to format to ship, Mini is the model that makes that assembly line less expensive and less sluggish.

The practical tradeoff

Mini will not feel like the deep thinking mode you would use for gnarly strategy synthesis or high stakes reasoning. That is the point. It is meant to be good enough, fast enough, cheap enough that you stop rationing attempts.

Nano: the pipeline gremlin (affectionate)

GPT 5.4 Nano is the model you hide inside your automations so they quietly stop breaking. Nano is for low latency, low cost text intelligence. It does not need to be poetic, it needs to be correct and fast.

Where Nano fits best

- Classification: tagging, routing, label assignment

- Extraction: pull fields, normalize data, convert messy inputs to structured outputs

- Validation: does this comply with our rules checks

- Ranking: pick best headline candidates, score options against constraints

- Triage: detect intent and send work to the right system

Nano is what you use when the question is not can AI do this but can AI do this 50,000 times today without becoming the bottleneck

Availability reality

OpenAI rolls models across ChatGPT and the API in a tiered way, and historically features land unevenly across surfaces at first. The key operational point is simple: Mini and Nano give teams more routing options, and routing is where cost control and speed improvements actually happen.

For current GPT 5.4 pricing, plus any caching related rates OpenAI lists, the reference is here: OpenAI API pricing.

At launch, the widely shared pricing is:

- GPT 5.4 Mini: $0.75 per 1M input tokens, $4.50 per 1M output tokens

- GPT 5.4 Nano: $0.20 per 1M input tokens, $1.25 per 1M output tokens

Why this changes content ops

Most teams are not blocked by we need a smarter model. They are blocked by:

- slow generation cycles

- expensive high volume runs

- fragile automations

- inconsistent formatting and compliance

- too many human minutes spent doing glue work

Mini and Nano target those bottlenecks directly. They do not promise magic. They promise capacity.

Model routing becomes normal

The big shift is cultural: teams will increasingly treat models like tools in a kit, not a single AI brain you use for everything.

That looks like:

- Nano handles intake, cleanup, tagging, compliance checks

- Mini handles drafts, rewrites, batch variants, multi channel formatting

- Full GPT 5.4 and deeper variants handle complex synthesis, planning, and high risk outputs

That is a grown up workflow. Also it is how you keep AI helpful without letting costs quietly mutate into a new line item nobody wants to explain.

Mini vs Nano at a glance

| Need | Use Mini | Use Nano |

|---|---|---|

| Campaign copy variants | Yes (fast drafting) | No (too lightweight) |

| Tagging and routing | Sometimes | Yes (cheap plus fast) |

| Format conversions | Yes (channel ready) | Yes (if simple) |

| Compliance checks | Yes (nuanced rules) | Yes (basic rules) |

| High stakes reasoning | Maybe | No |

The pragmatic implications

1) Faster iteration wins (again)

Lower cost models do not just save money. They change behavior. When generations are cheap enough, teams test more angles, explore more hooks, and get to the good version sooner.

2) QA becomes scalable

Nano especially makes it easier to run constant checks, tone rules, banned terms, formatting requirements, without assigning a human to be the last line of defense for every asset.

3) Automations get less precious

When every step uses the same expensive model, teams avoid building multi step systems because each run feels like lighting money on fire. Mini and Nano make multi stage automation more realistic.

If you are mapping how routing and throughput show up across GPT 5.4’s broader release, this companion post connects well: GPT-5.4 Makes AI Production Work Actually Flow.

What to watch next

A few things will determine whether Mini and Nano become true staples or just nice options:

- Consistency under load: do they stay reliable in batch runs

- Tool use reliability: especially for Mini in multi step flows

- Clear routing patterns: teams that document what goes where will benefit fastest

- Latency stability: Nano’s value collapses if response times wobble in real production

OpenAI’s broader direction with GPT 5.4 has been about making AI behave more like a system component than a novelty chat box. Mini and Nano continue that trajectory: less hype, more knobs. For creators shipping at volume, that is the kind of upgrade you feel in your calendar, not your timeline.