OpenAI is rolling out an upgrade to ChatGPT’s image generator that targets two creator pain points: getting complex scenes to stay coherent and getting text to stop melting. The most reliable anchor for what OpenAI considers the new image experience is its ChatGPT Images announcement, which frames the focus as stronger instruction following, faster iteration, and better fidelity in edits and details, including text (OpenAI: The new ChatGPT Images is here).

This rollout also fits a pattern we’ve been tracking on Blue Lightning: OpenAI’s next image leap looks less like new styles and more like new control. When the tape codenames popped up in public testing, the recurring signal was composition discipline and more usable typography. That earlier moment mattered because it suggested OpenAI was optimizing for commercial-grade reliability, not gallery vibes (see OpenAI Tape Leak: Real Gains for Image Creators).

What’s shipping now looks like that direction getting productized inside ChatGPT: stronger instruction following for multi-element composition plus improved text rendering.

The quiet shift here is simple: image generation is moving from “hit reroll until it behaves” to “iterate like an art director.”

What actually changed

OpenAI has not packaged this as a giant new model name moment in the interface for everyone at once. Instead, creators are noticing the change the way you notice a new phone camera: you stop fighting it as much.

The improvements cluster around two areas that matter in real production:

- Scene compositing and spatial control

- Typography and text placement consistency

That pairing is what turns AI images from cool concept frames into assets you can actually ship, especially for ads, thumbnails, decks, UI mockups, and product storytelling.

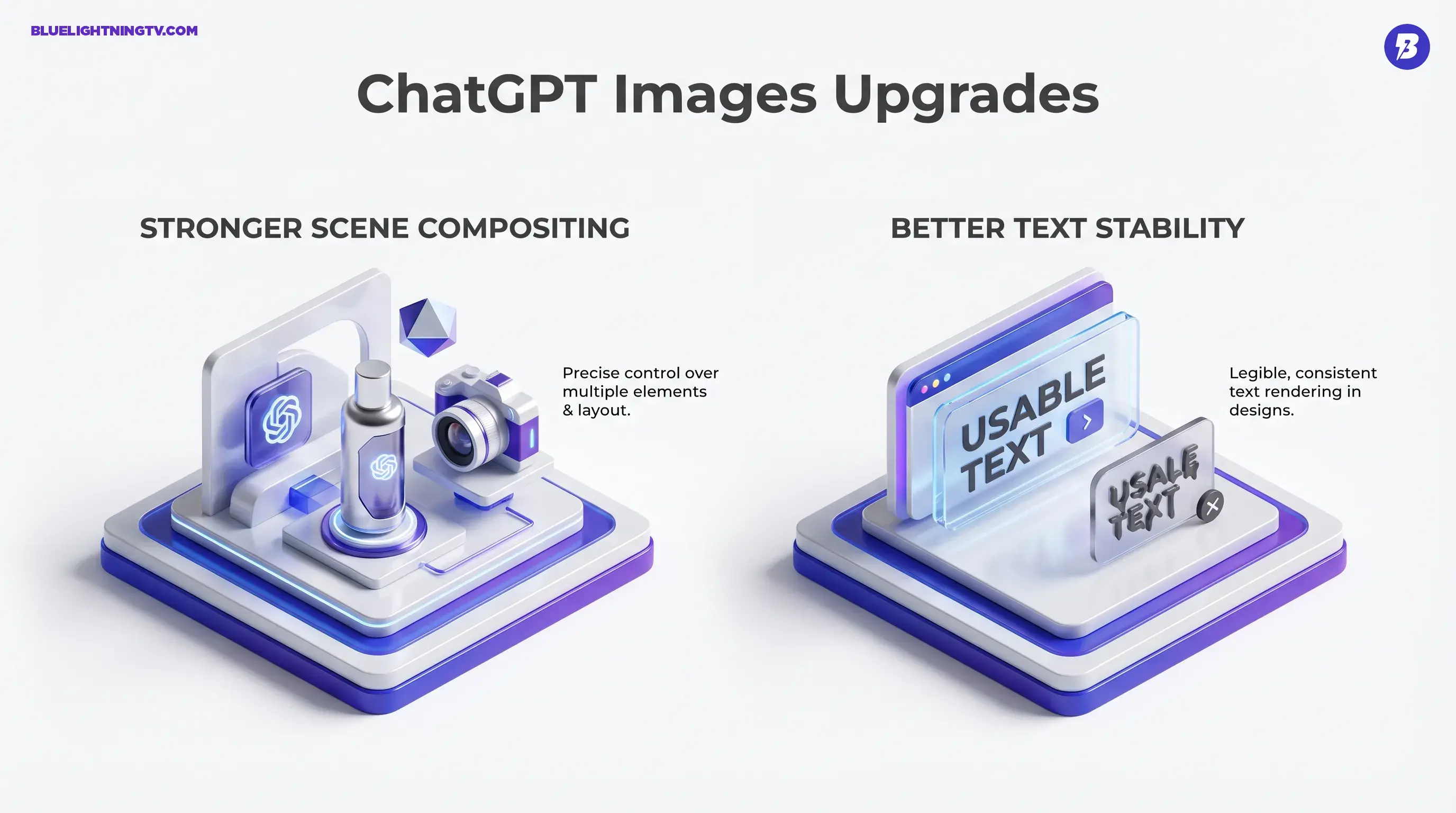

Stronger scene compositing

This upgrade is best understood as the model getting better at tracking multiple constraints in a single frame: subjects, props, layout, depth, foreground/background separation, and relative placement.

In earlier generations, asking for “three products, one hero, one supporting prop, logo top right” often resulted in objects fusing together, scale drift, or the model forgetting half the brief. Now, more creators are reporting outputs where:

- Objects keep their roles

- Spacing behaves

- Perspective is less chaotic

In practical terms, scene control does not mean you are placing objects with a drag tool as you would in Photoshop. It means your language controls the composition more reliably, especially when you provide explicit spatial instructions.

Better text stability

Text in AI images has historically been the final boss. OpenAI’s own framing of its newer ChatGPT Images experience includes improved detail fidelity, stronger instruction adherence, and clearer in-image text (OpenAI: The new ChatGPT Images is here). What creators care about is simpler:

Can I get usable text without rebuilding it in Figma?

Early signs: more often than before, yes, at least for short, high-contrast, clearly specified copy like titles, button labels, simple taglines, and signage.

Who benefits first

This update is not equally exciting for everyone. The biggest winners are creators whose images must behave like designed artifacts with layout logic, hierarchy, and brand constraints.

Social and thumbnail teams

Better scene control plus better text means:

- Fewer rerolls to land a readable hook

- Better control over subject, headline, and negative space

- Faster A/B concepts without opening five apps

Marketing and brand studios

For marketing, the win is not prettier output. It is less cleanup.

If the image generator can hold a multi-object composition and keep a tagline legible, it becomes viable for:

- Ad concept mockups

- Product banners

- Landing page hero explorations

- Pitch deck visuals that do not embarrass you in front of stakeholders

Product and UI storytelling

UI mockups are where scene control and text consistency collide. When the model can generate something that resembles a real interface with stable labels and buttons, you get faster prototyping and better visual communication, even if the output still needs finishing in Figma.

What it changes in workflow

The key operational shift is that prompting becomes less like gambling. You still iterate, but iteration starts to feel more like creative direction and less like wrestling.

Fewer rerolls per usable image

If you generate images for work, your real cost is measured per usable image, not per generation. Anything that increases first-pass usability changes throughput immediately.

| Creator task | Old bottleneck | What improves now |
|---|---|---|
| Multi-object scenes | Elements merge or drift | Better separation plus placement |
| Text-forward designs | Gibberish typography | More legible short text |
| UI-like mock visuals | Labels melt on reroll | Better text persistence |
| Brand mockups | Prompt misses constraints | Higher prompt adherence |
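The cost-per-usable-image point can be made concrete with a little arithmetic. The sketch below assumes each generation is an independent attempt with a fixed chance of being usable, so the average number of attempts per usable image is one divided by that chance. The rates and the attempts-per-hour figure are hypothetical placeholders for illustration, not measurements of ChatGPT Images.

```python
def expected_attempts(first_pass_rate: float) -> float:
    """Average generations needed per usable image.

    Assumes independent attempts with a constant success rate
    (a simplification; real sessions are messier).
    """
    if not 0 < first_pass_rate <= 1:
        raise ValueError("first_pass_rate must be in (0, 1]")
    return 1 / first_pass_rate


def usable_per_hour(first_pass_rate: float, attempts_per_hour: int) -> float:
    """Usable images per hour at a given attempt budget."""
    return attempts_per_hour * first_pass_rate


# Hypothetical rates, chosen only to show the shape of the effect.
OLD_RATE = 0.25  # one in four generations is usable
NEW_RATE = 0.50  # one in two generations is usable

print(expected_attempts(OLD_RATE))    # 4.0 attempts per usable image
print(expected_attempts(NEW_RATE))    # 2.0 attempts per usable image
print(usable_per_hour(OLD_RATE, 20))  # 5.0 usable images per hour
print(usable_per_hour(NEW_RATE, 20))  # 10.0 usable images per hour
```

Under these assumed numbers, doubling the first-pass rate halves the rerolls per usable image and doubles hourly output at the same attempt budget, which is why small gains in adherence show up quickly in throughput.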

More predictable compositional prompting

When the model reliably respects placement cues, creators can write prompts closer to a real brief:

- Leave clean negative space top left for copy

- Place product centered, supporting props small and secondary

- Logo top right, do not distort
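One way to keep brief-style prompts consistent across a team is to hold the brief as structured data and assemble the prompt from it. The following is a minimal sketch of that idea; `ImageBrief` and `brief_to_prompt` are hypothetical names invented for this example, not part of any OpenAI tool or API, and the output is plain text you would paste into ChatGPT yourself.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ImageBrief:
    """Hypothetical container for a creative brief."""
    subject: str
    placement: list[str] = field(default_factory=list)
    text: Optional[str] = None
    constraints: list[str] = field(default_factory=list)


def _sentence(fragment: str) -> str:
    """Normalize a fragment so it ends with exactly one period."""
    return fragment.strip().rstrip(".") + "."


def brief_to_prompt(brief: ImageBrief) -> str:
    """Join the brief into one prompt: subject, placement, text, constraints."""
    parts = [_sentence(brief.subject)]
    parts += [_sentence(p) for p in brief.placement]
    if brief.text:
        # Quote the copy so the intended wording is unambiguous.
        parts.append(f'Render the text "{brief.text}" exactly as written.')
    parts += [_sentence(c) for c in brief.constraints]
    return " ".join(parts)


brief = ImageBrief(
    subject="Product photo of a ceramic mug on a wooden desk",
    placement=[
        "Place product centered, supporting props small and secondary",
        "Leave clean negative space top left for copy",
        "Logo top right",
    ],
    constraints=["Do not distort the logo"],
)
prompt = brief_to_prompt(brief)
print(prompt)
```

Keeping placement cues and constraints as separate lists makes it easy to swap one instruction at a time between iterations, which is closer to how an art director revises a brief.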

Rollout reality

OpenAI image updates tend to arrive in waves. Some accounts see it early, others later, and access often varies by tier, platform, and region.

As of this week, creators on X are reporting a visible ChatGPT Images refresh, including better text and composition, rolling out unevenly with rate limits that can trigger cooldowns during heavy use.

If you are trying to confirm whether you have the upgraded behavior, the most honest test is not “does it look better?” but:

- Can it hold a complex layout with multiple objects

- Can it render short text that stays readable

- Does it obey spatial instructions more consistently
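Because outputs vary from one generation to the next, a single image is weak evidence either way. A simple approach, sketched below, is to run the same test prompt several times, judge each result by eye against the three checks above, and tally pass rates. The check names and the sample results are hypothetical; the judgments themselves are manual.

```python
from collections import Counter

# Hypothetical labels for the three checks listed above.
CHECKS = ("layout_held", "text_readable", "spatial_obeyed")


def pass_rates(trials: list[dict[str, bool]]) -> dict[str, float]:
    """Fraction of trials that passed each check.

    Each trial is a dict of manual judgments; a missing check counts as a fail.
    """
    if not trials:
        raise ValueError("need at least one trial")
    passed = Counter()
    for trial in trials:
        for check in CHECKS:
            passed[check] += bool(trial.get(check, False))
    return {check: passed[check] / len(trials) for check in CHECKS}


# Made-up judgments from four runs of the same prompt.
trials = [
    {"layout_held": True, "text_readable": True, "spatial_obeyed": True},
    {"layout_held": True, "text_readable": False, "spatial_obeyed": True},
    {"layout_held": True, "text_readable": True, "spatial_obeyed": False},
    {"layout_held": False, "text_readable": True, "spatial_obeyed": True},
]
print(pass_rates(trials))
```

Comparing these rates for the same prompt over time, or across accounts, gives a steadier signal than eyeballing one result.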

What to watch next

This rollout is part of a bigger shift in image generation: the battleground is moving from style wins to control wins.

We saw the early hint of this in the tape testing chatter where control and text were the loudest signals, not aesthetics (again, OpenAI Tape Leak: Real Gains for Image Creators). And we have already seen adjacent ecosystem moves like Adobe adding OpenAI’s GPT Image 1.5 as a selectable partner model inside Firefly, reinforcing that teams want the right engine inside the right workflow surface (Adobe Firefly: GPT 1.5 partner model).

Here’s the pragmatic read: if OpenAI keeps improving scene control and text stability, image generation becomes less “AI art” and more visual production infrastructure, the kind that cranks out ad variants, thumbnail comps, and product storytelling frames at speed.

The magic isn’t that it can make images. The magic is that it can make images that follow the brief.