ByteDance’s Seedance 2.0 is the latest reminder that generative video isn’t chasing “cool clips” anymore. It is chasing usable scenes. Early demos and reporting show a jump in realism, motion stability, and multi-input control that makes the output feel closer to an ad draft than a tech demo. A solid starting point on what launched and what it claims to do is ForkLog’s overview of Seedance 2.0.

This is news, not a fairy tale: Seedance 2.0 looks legitimately stronger than v1, but it’s also triggering immediate operational pressure around likeness and IP. In other words, it’s getting good enough that teams can ship faster and risky enough that teams can’t be casual anymore.

What actually changed

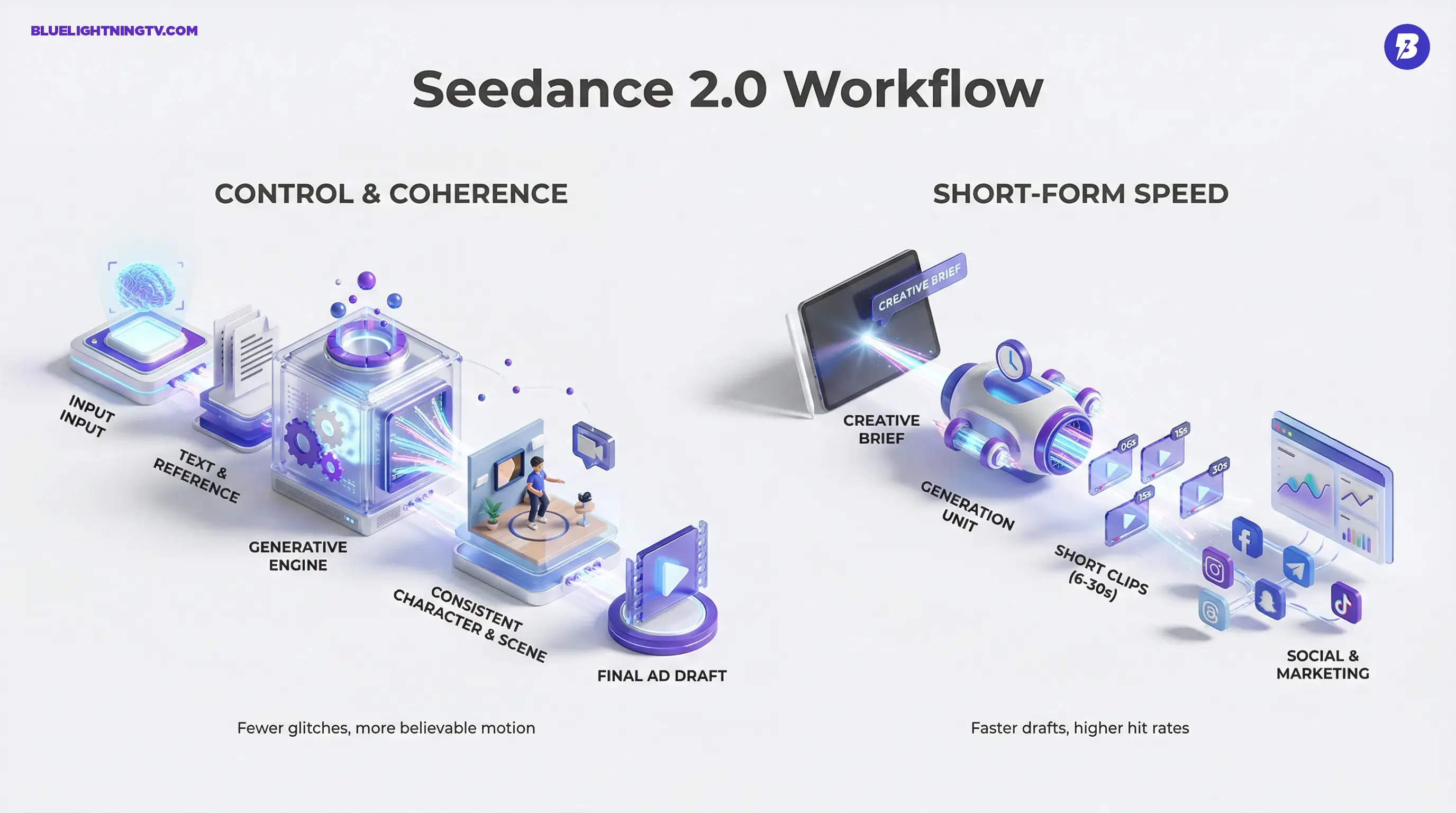

Seedance 2.0 is being presented (and behaving, in demos) as more than “text-to-video with better pixels.” The bigger story is control + coherence, which is what separates a fun generator from a tool you can put inside a real content pipeline.

Higher fidelity, fewer glitches

Creators watching the shared clips are reacting to three practical improvements:

- More consistent subjects: less face drift, fewer wardrobe “shape-shifts,” less mid-shot identity roulette.

- More believable motion: camera moves and body motion feel less like the model is fighting physics.

- Cleaner scene stability: fewer “melty” artifacts and fewer sudden continuity resets that kill editability.

The quiet benchmark shift: the question is no longer “does it look cinematic?” It’s “can I revise it without it falling apart?”

Multi-input direction, not just prompts

Seedance 2.0’s positioning is strongly “directorial”: text prompts plus references to anchor results. That matters because references are how teams keep a brand look from drifting when output volume goes up.

In practice, Seedance 2.0 is showing signs of supporting:

- Text-to-video for fast concepting

- Image and video reference to preserve character, environment, and framing intent

- Native multi-shot generation (moving toward sequences, not just isolated clips)

The key implication: you can hand Seedance something closer to a creative brief, not a prayer.

Why creators care now

The most valuable upgrades in gen video aren’t “more style.” They’re less rework. Seedance 2.0 is trending in the direction creators actually pay for: fewer takes wasted on basic continuity failures.

Short-form wins first

Seedance 2.0’s sweet spot, based on how it’s being demoed and discussed, looks like short clips of roughly 6 to 30 seconds, where speed matters most and perfection is less important than “good enough to ship (or pitch).”

That makes it instantly relevant to:

- performance marketers doing variant testing

- agencies pitching concepts that need to feel real in-room

- social teams that live in weekly (or daily) output cycles

- creators building repeatable series formats

The “draft-to-edit” gap shrinks

The practical leap isn’t that it replaces production. It’s that it produces a first pass that behaves more like footage:

- clearer shot intent (framing, lighting, tone)

- fewer continuity dealbreakers

- better odds you can cut it into a timeline without hiding it behind motion blur and vibes

If you’ve been using gen video as storyboards, Seedance 2.0 is pushing toward animatics that can pass as rough cuts.

How it stacks up

Seedance 2.0 lands in a crowded field where the real competition is over who gets you to a reviewable scene fastest. The category leaders have been converging on the same pillars: reference control, multi-shot structure, and, in some tools, native audio.

Also worth noting: this is landing while tools like Kling are pushing native audio as the workflow upgrade (sound makes a clip feel “finished” fast). If you want that context from our own coverage, see Kling 3.0 Native Audio Could Change AI Video.

Access is the product

Seedance 2.0’s rollout story matters because distribution shapes who actually benefits. As of 2026-02-16, reporting and creator posts indicate it remains China-first and tightly controlled, with access largely routed through ByteDance surfaces such as Dreamina (also associated with Jimeng in some coverage), plus limited partner or beta pathways. One public-facing summary of availability points to ByteDance apps and the China-first constraint: Seedance 2.0 availability notes.

Two practical realities for teams:

- Early access means uneven expectations: feature sets, export options, queues, and watermark rules can change fast.

- Tooling maturity isn’t just the model: the workflow UI, versioning, and review surfaces determine whether the model becomes “daily driver” or “cool experiment.”

The compliance squeeze

We’re not covering policy debates here, but we are covering what affects creator workflows right now. And Seedance 2.0 is already colliding with rights enforcement at full speed.

IP and likeness pressure lands immediately

Major entertainment players are publicly pushing back. Industry groups have condemned the tool’s outputs and training implications: AP News coverage of Hollywood’s response. There’s also reporting that Disney sent a cease-and-desist letter tied to Seedance 2.0 concerns: Axios report on Disney’s letter.

Even if you never touch a celebrity prompt, the operational consequence is broader:

- Platforms will get stricter faster: detection and takedown pipelines improve when a tool goes mainstream.

- Clients will ask new questions: “What references did you use?” becomes a standard line item, not a legal panic button.

- Internal review becomes mandatory: teams will need basic documentation for prompts, references, and intended use even for “just a pitch.”
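For teams wondering what “basic documentation” could look like in practice, here is a minimal sketch in Python. It is an illustration, not a standard or anything Seedance provides: the `GenerationRecord` class, its field names, and the `to_log_line` helper are all hypothetical. The idea is simply to capture the prompt, the references, and the intended use for each clip at generation time, in a form that can be appended to a log and handed to a client or reviewer later.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class GenerationRecord:
    """One generated clip and the inputs behind it (all field names are illustrative)."""
    prompt: str
    references: list[str]   # file names or asset IDs of image/video references used
    intended_use: str       # e.g. "internal pitch", "paid social variant"
    tool: str = "Seedance 2.0"
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def missing_fields(self) -> list[str]:
        """Return the names of required text fields that were left blank."""
        required = {"prompt": self.prompt, "intended_use": self.intended_use}
        return [name for name, value in required.items() if not value.strip()]


def to_log_line(record: GenerationRecord) -> str:
    """Serialize a record as a single JSON line for an append-only log file."""
    return json.dumps(asdict(record), ensure_ascii=False)


record = GenerationRecord(
    prompt="Slow product spin on a marble counter, soft morning light",
    references=["brand_ref_01.png"],
    intended_use="internal pitch",
)
print(to_log_line(record))
```

A review step could refuse to pass along any clip whose record returns a non-empty `missing_fields()` list, which keeps “What references did you use?” answerable even for a quick pitch.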

New reality: “It’s only a concept” is no longer a safety blanket when the concept looks shippable.

Safety features can slow rollout

We’ve already seen ByteDance pause at least one Seedance-adjacent capability under pressure. A reported “face-to-voice” style feature was temporarily disabled after backlash, as covered here: Seedance 2.0 Face-to-Voice Paused After Privacy Backlash.

That’s not a side drama. It’s a signal that identity-adjacent features are becoming gated, throttled, or removed when they spread faster than safeguards.

What this means next

Seedance 2.0 is part of a clear pattern across generative video: models are becoming less “clip slot machines” and more like compressed production systems. The competitive edge is shifting from raw generation quality to:

- revision stability (can you change one thing without breaking everything?)

- reference discipline (can you keep a character and brand consistent?)

- workflow surfaces (can teams review, approve, and version cleanly?)

For creators and teams, the opportunity is real: faster drafts with higher hit rates mean faster iteration, better pitches, and more content variants without ballooning production overhead.

But Seedance 2.0 also makes one thing obvious: when output looks real, the expectations become real too. The tool is raising the ceiling on what small teams can produce and raising the floor on the responsibility required to produce it without stepping on landmines.