OpenAI just introduced Frontier, a platform for deploying autonomous AI agents inside enterprise workflows. Think less “chatbot that helps” and more “digital coworker that actually runs the play.” The pitch is straightforward: connect agents to your company’s systems, give them scoped permissions, watch what they do, and continuously improve their performance with evaluation loops. For creator-led teams inside bigger orgs (marketing, brand, content ops, growth), this matters because it’s aimed at the unsexy part of scaling: reliable execution across messy tool stacks.

This isn’t a new model drop. It’s OpenAI trying to make agentic work operable, with identity, access control, auditability, and a managed execution environment built for production use, not demo day.

The shift: AI isn’t being sold as “smarter answers.” It’s being sold as repeatable work with receipts.

What OpenAI shipped

Frontier is positioned as an enterprise platform where AI agents can plan and execute multi-step tasks across tools while staying inside guardrails your IT and security teams can tolerate.

OpenAI frames it around a few core building blocks:

- Business context integration so agents can pull from the same sources humans use (data warehouses, CRMs, internal apps), building something like durable “institutional memory.”

- Agent execution that supports multi-step work in real environments (not just responding to prompts).

- Continuous evaluation loops for measuring performance and iterating.

- Security and governance controls intended to make it possible to deploy agents without the “please don’t break anything” prayer circle.

There’s also a dedicated product page that spells out the enterprise positioning and controls more explicitly: OpenAI Frontier (business).

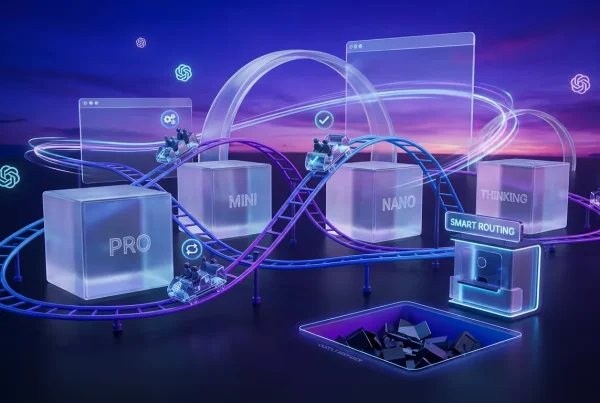

How agents actually work

The useful way to think about Frontier is as a managed layer between models and real work. Most teams already have some version of “AI in the workflow,” but it’s usually fragile:

- prompts in a doc

- a custom GPT someone made

- a Zapier chain held together by vibes

- a Slack bot that occasionally panics

Frontier is OpenAI formalizing the agent pattern: persistent identity + tool access + execution + oversight.

Persistent, not one-off

Frontier agents aren’t meant to be fire-and-forget prompt sessions. They’re designed to hold context across work, operate with a defined role, and execute steps across multiple systems without needing a human to re-explain the job every time.

Tool-connected by design

The big promise is agents that can operate inside the stack you already use: project management tools, content systems, analytics dashboards, internal knowledge bases, and whatever custom apps your org has duct-taped together over the last decade.

That’s the difference between “AI helps write” and “AI helps ship.”

Measured improvement loops

Frontier leans hard on evaluation: you don’t just deploy an agent and hope. You monitor outcomes, review failures, and use built-in evaluation and optimization loops to improve behavior over time. That matters for creative ops because “good enough” doesn’t cut it when the output is public-facing.
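To make the "monitor outcomes, review failures" idea concrete, here is a minimal sketch of an evaluation loop in plain Python. It is not Frontier's API: `EvalCase`, `run_eval`, and the stand-in `draft_agent` are hypothetical names invented for illustration, and the only check is whether required phrases appear in the output.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test case: a prompt plus phrases the output must contain (hypothetical shape)."""
    name: str
    prompt: str
    must_include: list[str]


def run_eval(agent: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case through the agent and collect the ones that fail."""
    failures = []
    for case in cases:
        output = agent(case.prompt).lower()
        missing = [term for term in case.must_include if term.lower() not in output]
        if missing:
            failures.append({"case": case.name, "missing": missing})
    passed = len(cases) - len(failures)
    pass_rate = passed / len(cases) if cases else 0.0
    return {"pass_rate": pass_rate, "failures": failures}


def draft_agent(prompt: str) -> str:
    """Stand-in for a real agent call; assumed for illustration only."""
    return f"Draft for: {prompt}. Terms and conditions apply."


cases = [
    EvalCase("disclaimer", "Spring sale email", ["terms and conditions apply"]),
    EvalCase("cta", "Spring sale email", ["shop now"]),
]
report = run_eval(draft_agent, cases)
print(report)
```

The failure list is the useful part: each entry says which case broke and what was missing, which is the input a team would review before tightening the agent's instructions and running the same cases again.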

Governance that’s not cosplay

Agent autonomy is cool until it touches customer data, brand accounts, paid media budgets, or anything else that can ruin your day. Frontier’s enterprise posture is basically: you can’t scale agents without control surfaces.

From OpenAI’s Frontier materials, the platform emphasizes:

- Enterprise identity and access management with role-based controls

- Explicit permissions (least-privilege access instead of “sure, connect everything”)

- Auditable actions and governance controls

- Observability so teams can see what agents did and monitor behavior in production
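As a rough illustration of how least-privilege permissions and auditable actions fit together, the sketch below gates every action on an explicit scope and records the attempt either way. The `ScopedAgent` class and the scope strings such as `cms:draft` are assumptions made up for this example, not anything from OpenAI's Frontier materials.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ScopedAgent:
    """Hypothetical agent wrapper: explicit allow-list plus an append-only audit log."""
    name: str
    allowed: frozenset[str]  # scopes this agent may use, e.g. "cms:read"
    audit_log: list[dict] = field(default_factory=list)

    def act(self, scope: str, detail: str) -> bool:
        """Record the attempt, then report whether the scope is permitted."""
        permitted = scope in self.allowed
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "scope": scope,
            "detail": detail,
            "permitted": permitted,
        })
        return permitted


agent = ScopedAgent("content-ops-agent", frozenset({"cms:read", "cms:draft"}))
agent.act("cms:draft", "create draft for launch post")  # permitted
agent.act("ads:spend", "raise paid media budget")       # denied, but still logged

for entry in agent.audit_log:
    print(entry["scope"], "->", "allowed" if entry["permitted"] else "denied")
```

Logging denied attempts as well as permitted ones is a deliberate choice in this sketch: a reviewer can see what the agent tried to do, not only what it did.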

There’s also a separate OpenAI capability that matters here: the Compliance API for Enterprise customers, designed to export ChatGPT Enterprise workspace logs and metadata for use in enterprise compliance tooling (for example, SIEM, DLP, and eDiscovery workflows). Reference: Compliance APIs for Enterprise Customers.

Why this matters for creators

If you work in content, brand, or marketing inside an enterprise, governance isn’t bureaucracy. It’s what determines whether your automation survives past the pilot.

Without logs and permissions, agentic tools get blocked. With them, they get adopted.

What changes for content teams

Frontier is marketed broadly to enterprise workflows, but the implications for content production are immediate, especially for teams that run on recurring cycles (launches, weekly reporting, multi-channel publishing).

Here are the practical places agent execution tends to land first:

Content ops orchestration

Agents can coordinate repeatable workflows like:

- intake → brief creation → asset checklist → routing → review reminders → final packaging

- enforcing templates and required fields

- nudging stakeholders when approvals stall

Not glamorous, but it’s where production velocity usually dies.
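The intake-to-packaging flow above can be sketched as a small stage machine that refuses to advance an item until its required fields are filled. The stage names mirror the list; `REQUIRED_FIELDS` and `advance` are hypothetical and only meant to show the shape of the logic.

```python
STAGES = ["intake", "brief", "asset_checklist", "routing", "review", "packaging"]

# Fields that must be filled before an item may leave a stage (assumed for illustration).
REQUIRED_FIELDS = {"brief": ["audience", "deadline"]}


def advance(item: dict) -> dict:
    """Return a copy of the item moved to the next stage, or flagged as blocked."""
    stage = item["stage"]
    missing = [f for f in REQUIRED_FIELDS.get(stage, []) if not item.get(f)]
    if missing:
        # Stay in place and say why, so a reminder can be sent to the owner.
        return {**item, "blocked_on": missing}
    idx = STAGES.index(stage)
    next_stage = STAGES[min(idx + 1, len(STAGES) - 1)]
    return {**item, "stage": next_stage, "blocked_on": []}


item = {"stage": "brief", "audience": "developers"}
blocked = advance(item)
print(blocked["stage"], blocked["blocked_on"])

ready = advance({**item, "deadline": "2025-06-01"})
print(ready["stage"], ready["blocked_on"])
```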

Multi-format repurposing

This is the “everyone wants it” use case: take a core asset and fan it out into variants. Frontier’s bet is that an agent can reliably handle more of the end-to-end chain (drafting, formatting, scheduling, and summarizing performance) because it can operate across tools, not just generate text.

QA and consistency checks

Brand-safe work is mostly about catching misses before they ship:

- wrong tone

- missing disclaimers

- outdated product specs

- broken links

- inconsistent naming

Frontier’s governance plus evaluation-loop approach is designed for this: you can define checks, watch outcomes, and tighten behavior.
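A few of the checks listed above are simple enough to express as code. The sketch below is a hedged example, not a Frontier feature: the disclaimer text, the banned name variants, and `run_checks` are all invented for illustration, and the link check only catches empty Markdown link targets rather than testing whether a URL resolves.

```python
import re

# All of these values are assumptions for the example.
REQUIRED_DISCLAIMER = "Terms apply."
BANNED_VARIANTS = ["AcmeCloud", "Acme cloud"]  # approved form would be "Acme Cloud"
EMPTY_LINK = re.compile(r"\[[^\]]*\]\(\s*\)")  # matches [text]() with no target


def run_checks(text: str) -> list[str]:
    """Return a list of human-readable issues found in a draft."""
    issues = []
    if REQUIRED_DISCLAIMER not in text:
        issues.append("missing disclaimer")
    for variant in BANNED_VARIANTS:
        if variant in text:
            issues.append(f"inconsistent naming: {variant!r}")
    if EMPTY_LINK.search(text):
        issues.append("empty link target")
    return issues


draft = "Try AcmeCloud today. [Learn more]()"
issues = run_checks(draft)
print(issues)

clean = "Try Acme Cloud today. [Learn more](https://example.com) Terms apply."
print(run_checks(clean))
```

Checks like tone are harder to reduce to string matching, so in practice a rule set like this would cover only the mechanical misses.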

The real win isn’t “AI writes.” It’s AI remembers the rules and applies them every time.

For more context on how OpenAI is pushing agents toward real deliverables, see OpenAI Trains Agents on Real Work Deliverables.

Frontier vs. existing “agents”

Plenty of products call themselves agentic now. Frontier’s differentiation is less about buzzwords and more about packaging:

- Execution environment intended to be dependable in production

- Enterprise control plane (permissions, auditing, monitoring, governance)

- Continuous improvement as a first-class workflow, not an afterthought

If you’ve been following OpenAI’s earlier agent tooling pushes, Frontier feels like the enterprise-grade continuation of that direction.

Quick comparison table

| What enterprises need | What Frontier provides | Why creators should care |
|---|---|---|
| Controlled access | Scoped permissions and enterprise governance controls | Agents can touch real tools safely |
| Accountability | Auditable actions and monitoring | Fewer “who changed this?” mysteries |
| Repeatable quality | Evaluation and optimization loops | Less rework, more consistent output |
| Stack integration | Connections to enterprise systems | Automation moves beyond copy and paste |

What to watch next

Frontier’s launch is a signal that the agent race is moving to the boring (and profitable) part: operations.

A few near-term implications are worth tracking:

Agent UX becomes managerial

Teams will need people who can “manage agents” the way they manage freelancers: setting scope, reviewing outputs, tightening feedback loops, and monitoring performance. Not everyone has to become technical, but someone has to own the system.

Integration breadth will decide winners

The platform that securely plugs into the most real enterprise stacks wins. Raw model quality matters, but workflow coverage matters more when your goal is “done,” not “drafted.”

Creative leverage shifts upstream

When ops gets automated, the creative team’s advantage becomes taste, strategy, and decision-making, because the “moving stuff around” layer gets cheaper and faster.

Bottom line

OpenAI Frontier is OpenAI saying: agents are ready to leave the lab and enter the org chart, with the governance, auditing, enterprise controls, and measurable improvement loops that enterprises require. For creator-led teams, that’s not about replacing creativity. It’s about removing the production friction that slows good ideas down.

If Frontier delivers on reliability (the hard part), it’ll change how content and marketing teams scale: fewer handoffs, tighter QA, faster cycles, and more energy spent where humans still win: taste, story, and judgment.