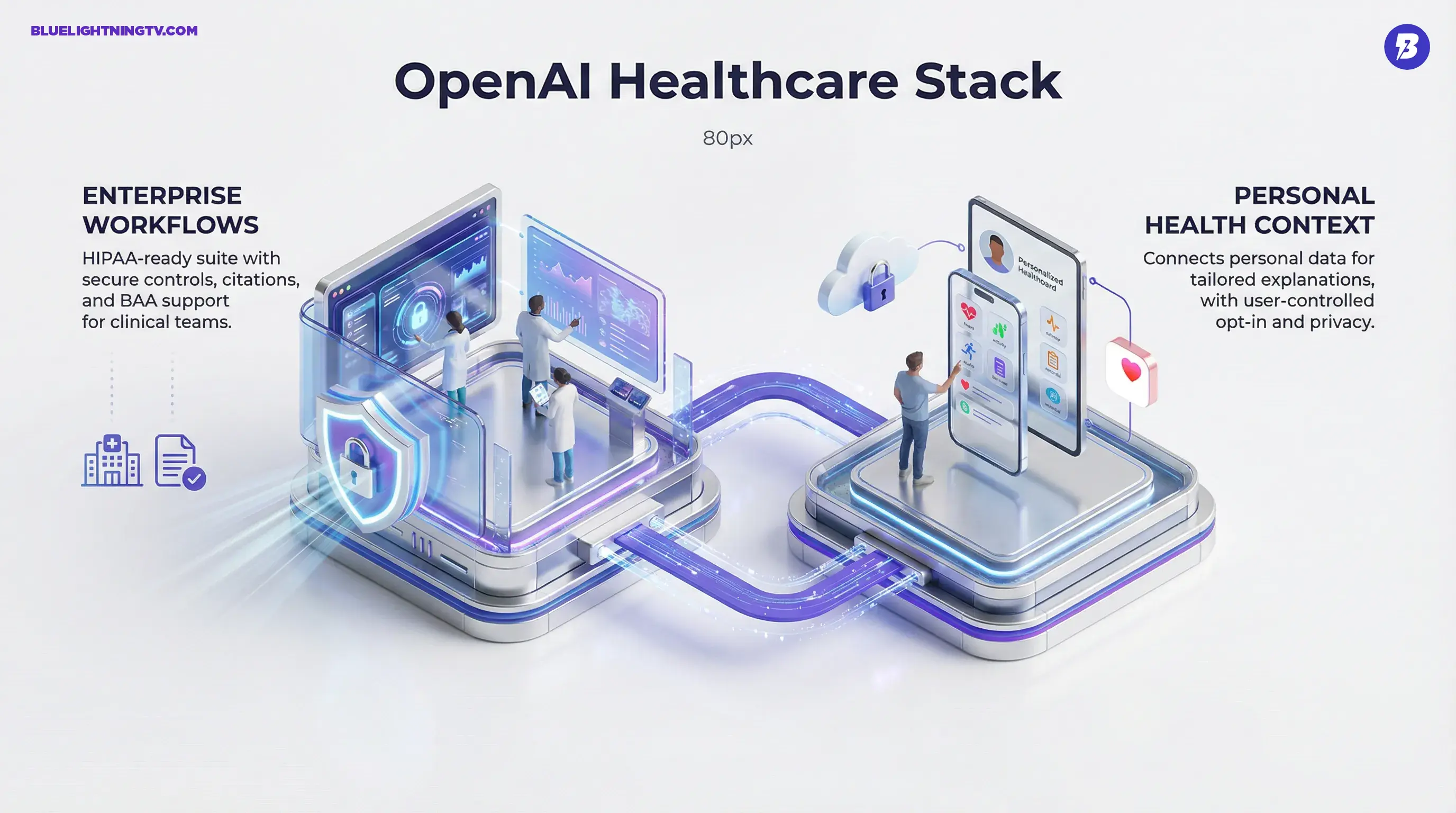

OpenAI is officially going vertical in medicine with OpenAI for Healthcare (announced January 8, 2026), a new suite anchored by ChatGPT for Healthcare (an enterprise offering designed for HIPAA-regulated environments, including BAA support for eligible customers) and ChatGPT Health (a dedicated place inside ChatGPT for health conversations that can connect to personal data, with opt-in controls). The headline is not “AI replaces doctors” (please, no). It is more practical: OpenAI is packaging models, security, and retrieval into a product shape hospitals can actually buy, deploy, and audit.

For creators and operators in health-tech, this matters because it signals a familiar pattern we have seen in media, design, and dev tools: general-purpose models do not “win” regulated industries until they are wrapped in workflow constraints, compliance controls, and integrations. OpenAI is now doing that wrapping loudly.

The real shift is not capability. It is deployability: when the tool is designed around what compliance teams and clinicians will tolerate, adoption stops being hypothetical.

What OpenAI shipped

Two products, two audiences

OpenAI’s healthcare push splits cleanly:

- ChatGPT Health: a consumer-facing health space inside ChatGPT, designed for health questions, explanations of records and lab results, and wellness context, and positioned as a complement to care rather than a replacement for it. OpenAI says users can connect certain health data sources, including integrations like Apple Health and other supported apps (availability varies by platform, region, and rollout status).

- ChatGPT for Healthcare: an enterprise offering built for organizations handling PHI, with security controls and a “regulated workspace” posture (features that do not meet the bar are disabled by default), plus options OpenAI highlights like audit logs and enterprise identity.

OpenAI is also leaning on “grounded” outputs: evidence retrieval plus citations as a core part of the healthcare product positioning.

A compliance-shaped ChatGPT

A big part of this announcement is less “new model” and more “new container.” Healthcare does not want a clever chatbot. It wants:

- controlled access

- logging

- configurable retention

- clear data boundaries

- procurement-friendly terms

OpenAI is positioning ChatGPT for Healthcare as exactly that, including support for signing a BAA in HIPAA contexts for eligible customers (the difference between “cool demo” and “this can touch real workflows”).

What’s actually different

Citations as a product feature

Healthcare has been speedrunning the “LLMs hallucinate” discourse for two years, and the only useful outcome is this: verification has to be built into the interface. OpenAI is emphasizing evidence-backed responses with transparent citations in the healthcare suite, which changes how these tools get used. When citations are always present, the workflow becomes “draft plus verify” instead of “trust plus pray.”

That also sets a competitive bar for anyone selling LLMs into clinical settings: retrieval is not optional.

A regulated workspace approach

OpenAI points to a “regulated workspace” configuration: a safer default environment where risky features are off unless explicitly enabled. That is an underappreciated product move. In hospitals, the default has to be conservative because:

- staff turnover is real

- usage policies are hard to enforce manually

- “someone pasted PHI into the wrong box” is not a rare edge case

This is OpenAI acknowledging that product defaults are policy.
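To make “defaults are policy” concrete, here is a minimal sketch of a default-deny feature gate. Everything in it is an assumption for illustration: the feature names, the `WorkspacePolicy` class, and the `approved_by` field are hypothetical and do not describe OpenAI’s actual settings or API. The point is only the shape: a feature is off unless it is known-safe or someone has explicitly enabled it.

```python
from dataclasses import dataclass, field

# Hypothetical feature names, for illustration only.
ALL_FEATURES = {"chat", "file_upload", "web_browsing", "third_party_connectors"}
SAFE_BY_DEFAULT = {"chat"}


@dataclass
class WorkspacePolicy:
    # Maps each explicitly enabled feature to whoever approved it.
    enabled_overrides: dict[str, str] = field(default_factory=dict)

    def enable(self, feature: str, approved_by: str) -> None:
        # Refuse unknown names so a typo cannot silently create a setting.
        if feature not in ALL_FEATURES:
            raise ValueError(f"unknown feature: {feature}")
        self.enabled_overrides[feature] = approved_by

    def is_allowed(self, feature: str) -> bool:
        # Default-deny: anything not known-safe or explicitly enabled is off.
        return feature in SAFE_BY_DEFAULT or feature in self.enabled_overrides


policy = WorkspacePolicy()
print(policy.is_allowed("file_upload"))  # off until someone opts in
policy.enable("file_upload", approved_by="compliance-team")
print(policy.is_allowed("file_upload"))  # on, with a recorded approver
```

Recording who approved each override is a design choice in this sketch, not a documented product behavior; it is there because an enabled risky feature with no named owner is hard to audit later.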

Personal data connections (carefully)

On the ChatGPT Health side, OpenAI is leaning into “bring your own context”: connecting personal health data (including Apple Health, where available) so answers can be tailored. That is the magic piece, but also the part that needs the most careful handling. The upside is obvious: fewer generic answers, more contextual explanations. The risk is also obvious: people treat contextual output as clinical instruction.

OpenAI’s framing tries to keep the tool on the “understand and prepare” side of the line, not “diagnose and treat.”

Who’s piloting it

OpenAI is not rolling this out as a self-serve free-for-all. The initial push is anchored by major healthcare organizations. OpenAI’s announcement and reporting around the launch cite early deployments and pilots with organizations including HCA Healthcare, Boston Children’s Hospital, Cedars-Sinai, and Stanford Medicine Children’s Health (alongside others such as AdventHealth, Baylor Scott & White Health, UCSF, and Memorial Sloan Kettering). Axios’ overview frames the launch as OpenAI moving deeper into clinical and patient-facing use cases, with institutions testing workflows rather than just models.

This is the enterprise playbook: land with big logos, learn where the bodies are buried (workflow-wise), then expand.

Where it hits workflows

Documentation and summarization

The most immediate value is still the least glamorous: paperwork. Clinicians spend an absurd amount of time on documentation, and “LLM as drafter” is the most proven pattern in real deployments. Expect the suite to get used for:

- drafting visit notes and after-visit summaries

- summarizing long histories into quick context

- turning messy narrative into structured language that teams can scan

This does not remove clinician responsibility. It removes the blank page.

Patient communications at scale

Hospitals send endless communications that must be accurate, consistent, and readable:

- lab result explanations

- prep instructions

- follow-ups and reminders

- “here’s what this means” messages that reduce call volume

ChatGPT for Healthcare is positioned to draft these quickly while keeping a citation-forward posture when medical explanation is involved.

Admin ops (quietly huge)

It is tempting to focus on clinical magic, but the operational side is where adoption becomes sticky:

- policy summaries

- internal SOP Q&A

- training materials

- prior authorization drafts (where permitted)

- call center knowledge assistance

These are lower-risk than diagnosis support, easier to measure, and often easier to approve internally.

What this means for buyers

It is not “HIPAA compliant,” it is “HIPAA-supporting”

This nuance matters: tools do not become compliant by branding. Implementations become compliant through configuration, contracts, controls, and training. OpenAI is clearly signaling the right components, like BAAs (for eligible customers) and regulated workspace defaults, but healthcare orgs still need their own governance.

Here is the practical framing:

| Area | What OpenAI offers | What you still own |
|---|---|---|
| Data protection | Enterprise controls, audit-friendly features | Access policies, staff behavior, incident response |
| Clinical safety | Citations, evidence retrieval patterns | Review requirements, escalation rules, QA |
| Integration | Enterprise positioning for workflows | Connecting systems safely, validating outputs |

If you are a vendor building on this stack, the “how we handle PHI” story just became table stakes.

It sets a new expectation for enterprise GenAI

Healthcare is one of the strictest proving grounds for generative AI products. If OpenAI can make ChatGPT feel normal inside hospital security and compliance constraints, other regulated industries will borrow the blueprint: finance, insurance operations, legal workflows, and any environment where logs and retention policies matter more than vibes.

The limitations (the part everyone skips)

Accuracy still is not a checkbox

Citations help, but they do not guarantee correctness. Retrieval systems can surface the wrong source, or the model can misapply it. The product design nudges users toward verification, but the system still needs:

- clinician review

- clear “never do this” boundaries

- monitoring for repeated failure modes

In other words: citations reduce risk; they do not delete it.
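A rough sketch of what “draft plus verify” can look like as a release gate, under stated assumptions: the `Claim` and `Draft` types, the citation identifier format, and the `can_release` rule are all hypothetical, not anything OpenAI has described. It encodes the argument above in the simplest possible way: citations are necessary, but a draft still does not go out without clinician sign-off.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    citations: list[str]  # source identifiers; the format is an assumption


@dataclass
class Draft:
    claims: list[Claim]
    clinician_approved: bool = False


def uncited_claims(draft: Draft) -> list[str]:
    # Return the text of every claim that has no supporting source attached.
    return [claim.text for claim in draft.claims if not claim.citations]


def can_release(draft: Draft) -> bool:
    # Citations are necessary but not sufficient: a human still signs off.
    return not uncited_claims(draft) and draft.clinician_approved


draft = Draft(
    claims=[
        Claim("Fasting is required before this test.", ["guideline-123"]),
        Claim("Results are usually ready within two days.", []),
    ]
)
print(uncited_claims(draft))  # the second claim needs a source
print(can_release(draft))     # blocked: uncited claim and no sign-off
```

Note what this gate does not check: whether a cited source actually supports the claim. That gap is exactly why the clinician review step stays in the loop.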

Integrations are the bottleneck

Most “AI in healthcare” projects do not fail because the model is dumb. They fail because the organization cannot safely connect:

- EHR context

- message workflows

- document repositories

- identity and permissions

OpenAI’s suite is trying to smooth that path, but every hospital is its own snowflake. Expect uneven rollout speed.

Consumer health is a delicate zone

ChatGPT Health will be heavily judged by how it handles:

- uncertainty

- “should I seek care?” moments

- sensitive topics

- overconfident tone

OpenAI can say “not medical advice” all day. The UX has to reinforce it in practice.

Why this launch matters now

OpenAI for Healthcare is a signal that the generative AI era is moving from “model access” to “industry-ready packaging.” The healthcare world does not need more demos. It needs tools that can survive:

- compliance review

- procurement

- integration

- clinical reality (aka: chaos)

OpenAI’s bet is that a regulated ChatGPT with citations, guardrails, and enterprise controls can become the default interface layer for a lot of hospital knowledge work, even if the actual “doctoring” stays firmly human.

And honestly? That is the right target. The fastest wins in healthcare are not sci-fi diagnostics. They are getting smart people out of the documentation dungeon and back to patient care without turning privacy and safety into an afterthought.