Kuaishou’s Kling AI has rolled out its 2.1 model series, advancing text-to-video generation with upgrades centered on motion realism, visual coherence, and tighter control. Headlining the release is Start/End Frames, a dual-frame conditioning capability that lets creators explicitly define the first and last frames of a clip, alongside new Shot Control options and extended clip durations of up to 10 seconds.

What’s new in Kling AI 2.1

The 2.1 series broadens creative control while aiming to minimize post-production cleanup. According to Kuaishou’s latest disclosures, the model now supports:

- Start/End Frames: First and last frame conditioning to land scenes on a precise endpoint and improve continuity across beats and transitions.

- Shot Control: Expanded controls over camera motion and shot dynamics for more predictable, cinematic move sets.

- Longer Clips: Up to 10-second text-to-video outputs, reflecting a push for richer sequences in a single pass.

Kuaishou positions the update as part of a broader AI push across its content ecosystem, with Kling at the core of its motion-quality advances and editorially useful controls. The company’s investor communications outline the feature set, including shot mechanics and the extended duration window available to ordinary users.

Bottom line: Deterministic endpoints via Start/End Frames bring an editor’s intent into the model loop, reducing reshoots and hero-frame hunting for product beats, transitions, and title cards.

Start/End Frames: from intent to delivery

Dual-frame conditioning is the standout. By pinning both the opening and closing frames, creators can steer a clip toward a specific finale. This is useful for product reveals, narrative beats that must resolve on a particular composition, and interstitials that need a frame-accurate handoff. Developer documentation and integrator summaries note that Kling’s first- and last-frame controls improve continuity and can even produce loop-like effects when the same image is used at both ends.
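To make the idea concrete, here is a minimal sketch of one generic way to express endpoint conditioning: a per-frame slot list plus a binary mask marking which frames the user has fixed. Many video diffusion systems feed the generator something similar (pinned frame latents plus a mask), but that is an assumption for illustration, not Kling’s documented mechanism. `endpoint_conditioning` and `is_loop` are hypothetical helpers, and frames are stood in for by plain lists of floats.

```python
from typing import List, Optional, Sequence, Tuple

Frame = Sequence[float]  # hypothetical stand-in for an image or its encoded latent


def endpoint_conditioning(
    first: Frame, last: Frame, num_frames: int
) -> Tuple[List[Optional[Frame]], List[int]]:
    """Pin frame 0 and frame num_frames - 1; leave interior slots to the generator.

    Returns (cond, mask): cond[i] is the pinned frame or None, and mask[i] is 1
    where the user fixed the frame, 0 where the model must synthesize it.
    """
    if num_frames < 2:
        raise ValueError("pinning both ends needs at least two frames")
    if len(first) != len(last):
        raise ValueError("start and end frames must have the same size")
    cond: List[Optional[Frame]] = [None] * num_frames
    mask = [0] * num_frames
    cond[0], cond[-1] = first, last
    mask[0] = mask[-1] = 1
    return cond, mask


def is_loop(cond: List[Optional[Frame]]) -> bool:
    """Same image at both ends means the clip returns to where it began."""
    return cond[0] is not None and cond[-1] is not None and list(cond[0]) == list(cond[-1])


start = [0.1, 0.5, 0.9]
cond, mask = endpoint_conditioning(start, start, num_frames=8)
print(mask)           # [1, 0, 0, 0, 0, 0, 0, 1]
print(is_loop(cond))  # True: a loop-style request
```

Everything between the two pinned slots is left for the model to fill, which is why the endpoints become predictable while the motion in between still depends on the prompt.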

Performance and model design notes

While Kuaishou has emphasized creator-facing features, model descriptions circulating in the developer ecosystem point to technical changes aimed at motion plausibility and temporal stability. Summaries cite a 3D spatio-temporal joint attention approach underpinning improved physical simulation, smoother trajectories, and more lifelike expressions. According to those summaries, these traits show up most clearly in complex camera moves and multi-object interactions.
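The sketch below illustrates what "joint" spatio-temporal attention generally means: every token in a T*H*W grid is flattened into one sequence, so each position can attend across space and time at once. It assumes a single head with identity query/key/value projections to stay short, and it is a textbook illustration, not Kling’s architecture; all helper names are hypothetical. `attention_pairs` contrasts the number of scored query-key pairs with a factorized design that attends within each frame and then across frames at each spatial position.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention.

    Assumption: identity Q/K/V projections (real models learn these).
    """
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[i] for w, v in zip(weights, tokens)) for i in range(d)])
    return out


def joint_spatiotemporal_attention(video: List[List[List[Vector]]]) -> List[List[List[Vector]]]:
    """video[t][h][w] is a feature vector; attend over all T*H*W tokens at once."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    # Flatten in (t, h, w) order into one sequence spanning space AND time.
    flat = [video[t][h][w] for t in range(T) for h in range(H) for w in range(W)]
    mixed = iter(self_attention(flat))
    # Restore the [t][h][w] layout in the same order.
    return [[[next(mixed) for _ in range(W)] for _ in range(H)] for _ in range(T)]


def attention_pairs(T: int, H: int, W: int) -> Tuple[int, int]:
    """Query-key pairs scored: joint vs. factorized (spatial per frame, then temporal per position)."""
    joint = (T * H * W) ** 2
    factorized = T * (H * W) ** 2 + (H * W) * T ** 2
    return joint, factorized


clip = [[[[1.0, 0.0]]], [[[0.0, 1.0]]]]  # T=2 frames, 1x1 grid, 2-dim features
out_clip = joint_spatiotemporal_attention(clip)
print(out_clip[0][0][0])          # frame-0 token now carries part of frame 1's features
print(attention_pairs(16, 8, 8))  # (1048576, 81920)
```

The pair counts show the usual trade-off: joint attention lets a cue in one frame directly inform any position in any other frame, at a much higher compute cost than the factorized alternative.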

Kling 2.1 at a glance

| Capability | What’s in 2.1 | Notes |
|---|---|---|
| Clip duration | Up to 10 seconds | Extended for mainstream use cases |
| Resolution modes | 720p (Standard), 1080p (High Quality) | Targets social and editorial needs |
| Frame conditioning | First and Last frame control | Enables precise end-frame delivery and loops |
| Shot control | Camera movement and angle guidance | More predictable cinematics |
| Motion engine | 3D spatio-temporal attention (reported) | Aims at smoother, more physical motion |
| Indicative cost & speed | 5s 720p: 20 pts; 5s 1080p: 35 pts; HQ renders under 1 min | Per recent market coverage of Kling’s credits and latency |

For context on pricing and latency, Hong Kong market coverage recently detailed a credit-based model (Inspiration Points) with 5-second videos priced at 20 points in standard 720p and 35 points in 1080p high quality, with high-quality renders typically completing in under a minute. The same coverage framed Kling’s 10-second outputs as the longest duration currently accessible to ordinary users among mainstream offerings.
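The reported prices make it straightforward to budget a batch of renders. A minimal sketch, assuming the indicative 5-second prices above still hold (actual pricing may vary by plan or over time); `batch_cost` and `credits_per_second` are hypothetical helpers, and because the coverage gives no 10-second prices, the sketch does not extrapolate to longer clips.

```python
# Indicative prices per 5-second clip in Inspiration Points, as reported in
# Hong Kong market coverage. Assumption: these figures are current.
CREDITS_PER_5S_CLIP = {
    "720p_standard": 20,
    "1080p_high_quality": 35,
}
CLIP_SECONDS = 5


def batch_cost(mode: str, num_clips: int) -> int:
    """Total credits for num_clips five-second clips in the given mode."""
    return CREDITS_PER_5S_CLIP[mode] * num_clips


def credits_per_second(mode: str) -> float:
    """Effective credit rate per second of output for a 5-second clip."""
    return CREDITS_PER_5S_CLIP[mode] / CLIP_SECONDS


# Hypothetical workflow: iterate on 8 drafts at 720p, then render 2 finals at 1080p.
drafts = batch_cost("720p_standard", 8)          # 160
finals = batch_cost("1080p_high_quality", 2)     # 70
print(drafts + finals)                           # 230
print(credits_per_second("720p_standard"))       # 4.0
print(credits_per_second("1080p_high_quality"))  # 7.0
print(CREDITS_PER_5S_CLIP["1080p_high_quality"] / CREDITS_PER_5S_CLIP["720p_standard"])  # 1.75
```

On these numbers, a 1080p high-quality clip costs 1.75 times a 720p standard one, which is why drafting at 720p and reserving 1080p for finals keeps spend down.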

Why dual-frame control matters now

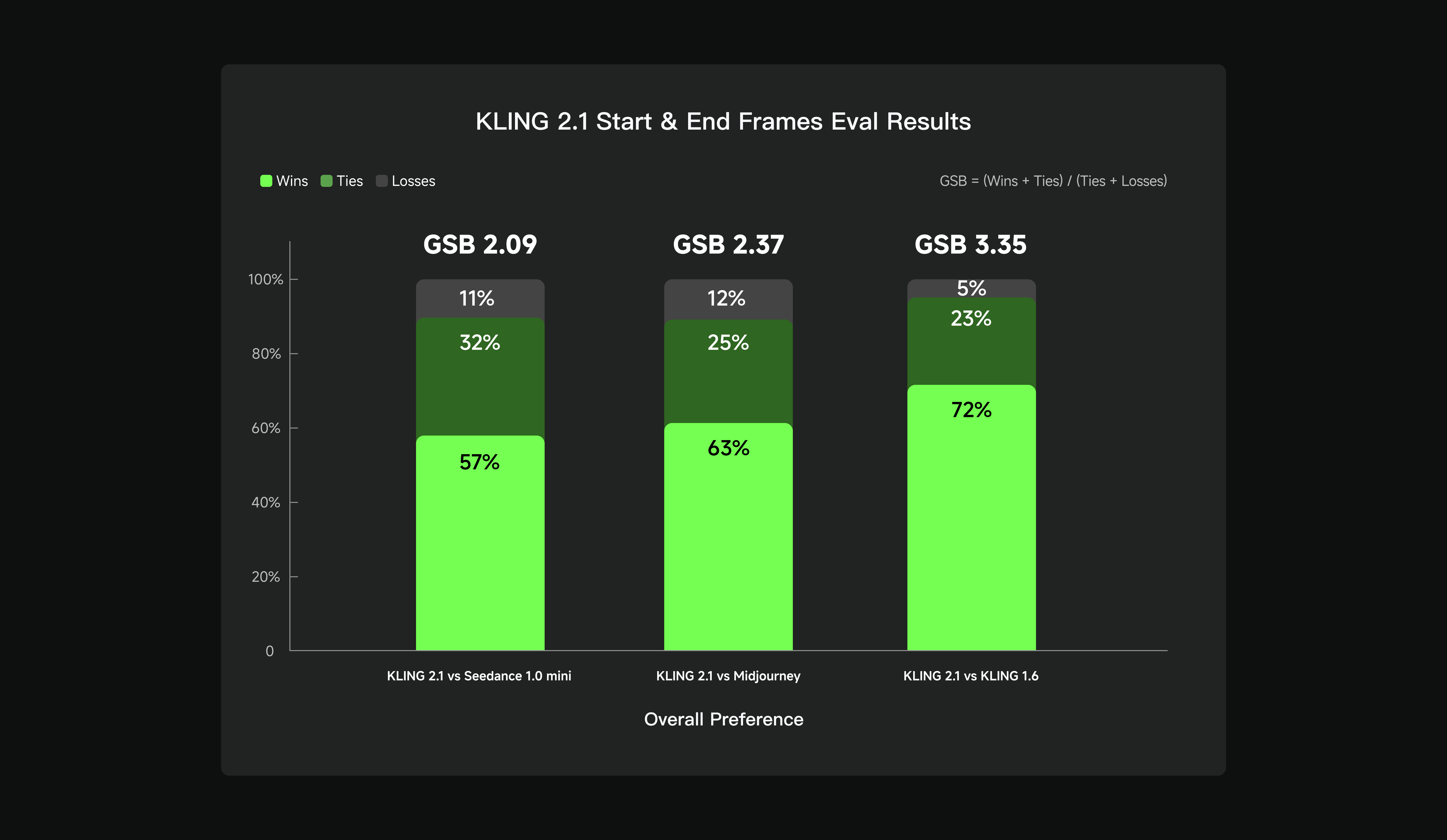

As AI video shifts from novelty to production utility, deterministic endpoints and camera predictability are becoming table stakes. Kling’s Start/End Frames are noteworthy because they move precision upstream into generation, reducing downstream fixes such as stabilization, surgical cuts to salvage a single frame, or manual re-timing to hit graphic cues. The bar chart above reflects Kling’s internal evaluation of Start/End Frames behavior, presented as a win/tie/loss snapshot of how often the model lands the requested start and end conditions without visible artifacts or drift.

Context: Duration, determinism, and shot control form this season’s competitive axis for AI video. Kling’s 2.1 release clearly stakes ground on all three.

Positioning within the AI video landscape

Across the field, core goals are converging: keep motion credible, respect prompts, and deliver edit-ready outputs that slot into existing timelines. Kling’s 10-second ceiling and dual-frame control arrive as other players push open-source accessibility and research transparency. For instance, Genmo’s Mochi 1 takes an open-weight, research-forward approach with shorter 480p clips built for motion plausibility and reproducibility, an instructive counterpoint on scope and philosophy.

Kling’s path instead emphasizes production-grade controls inside a consumer-accessible platform. The focus on shot mechanics and end-frame determinism signals a bid to shrink the distance between AI drafts and editorially usable scenes, particularly important for product shots, promo transitions, and social edits that require precise landings.

Availability and ecosystem signals

Per Kuaishou’s investor communications, Kling 2.1 is part of a broader strategy to embed AI across content creation and monetization surfaces, bringing creator-facing controls forward at the platform level. The release cadence suggests Kuaishou is optimizing for day-to-day usability: clip length, generation speed, and determinism.

The takeaway

Kling 2.1 consolidates recent advances into an editor-friendly package: Start/End Frames for deterministic outcomes, Shot Control for predictable cinematics, and 10-second sequences for richer stories in one go. While architectural specifics surface through integrator documentation, the creator-facing net effect is clear: fewer retries to hit a key frame, less stabilization and patchwork in the NLE, and more confidence that a prompt will resolve exactly where it needs to, at speed and at the resolutions most social and product workflows demand.