NVIDIA just pushed the “one image to whole world” idea into a much more creator-usable place with Lyra 2.0, a research release that generates persistent, explorable 3D environments from a single photo, then exports those worlds as 3D Gaussian splats and meshes you can actually move downstream. The code is also out in the open via nv-tlabs/lyra on GitHub, which is a meaningful difference from a lot of “cool demo, no pipeline” world gen.

Lyra 2.0 is not trying to be “text to video but longer.” It is closer to: seed a space, explore it with camera motion, keep it coherent when you look back, and output something that resembles real 3D data. In practical terms, it is a new on-ramp for environment ideation and previs, especially for teams who already live in Unreal, Unity, and Blender land and are tired of AI outputs that end at an MP4.

What Lyra 2.0 ships

Lyra 2.0 takes a single input image, with optional text prompting per NVIDIA’s materials, and generates a walkthrough along user-driven camera motion. From there, it reconstructs the scene into 3D representations that can be used outside the model’s sandbox.

The headline claim is less “it looks nice” and more “it stays consistent as you move around.” If you have ever tried to push a generative model past a short clip, you know the failure mode: the alley you just walked through becomes a different alley the moment you turn around. Lyra 2.0 is explicitly built to fight that, targeting long-horizon consistency by addressing spatial forgetting and temporal drift.
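The camera-control interface itself is not covered here, so the snippet below is a hypothetical way to script exactly that stress test: walk forward, turn around, walk back, then turn again so the last frame should show the same view as the first. `CameraPose` and `out_and_back_path` are illustrative names, not part of nv-tlabs/lyra, and the pose is simplified to a position plus a yaw angle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraPose:
    """Hypothetical, simplified pose: position in scene units plus yaw in degrees."""
    x: float
    y: float
    z: float
    yaw_deg: float


def out_and_back_path(distance: float, steps: int, turn_steps: int = 8) -> list:
    """Forward along +z, turn 180 degrees, walk back, turn again to face forward."""
    poses = []
    # Leg 1: move forward, facing yaw 0.
    for i in range(steps + 1):
        poses.append(CameraPose(0.0, 0.0, distance * i / steps, 0.0))
    # Turn around in place at the far end (the "look back" moment).
    for i in range(1, turn_steps + 1):
        poses.append(CameraPose(0.0, 0.0, distance, 180.0 * i / turn_steps))
    # Leg 2: walk back to the origin, facing yaw 180.
    for i in range(1, steps + 1):
        poses.append(CameraPose(0.0, 0.0, distance * (1 - i / steps), 180.0))
    # Final turn, normalized to [0, 360), so the last pose matches the first.
    for i in range(1, turn_steps + 1):
        poses.append(CameraPose(0.0, 0.0, 0.0, (180.0 + 180.0 * i / turn_steps) % 360.0))
    return poses


path = out_and_back_path(distance=5.0, steps=10)
print(len(path), path[0] == path[-1])  # 37 True
```

Comparing the first and last rendered frames of a path like this is a cheap, repeatable way to check whether a generated world actually remembers itself.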

The real story: AI environments are graduating from “pretty drift” into “state that holds up,” which is the difference between a fun render and something you can build on.

The output formats matter

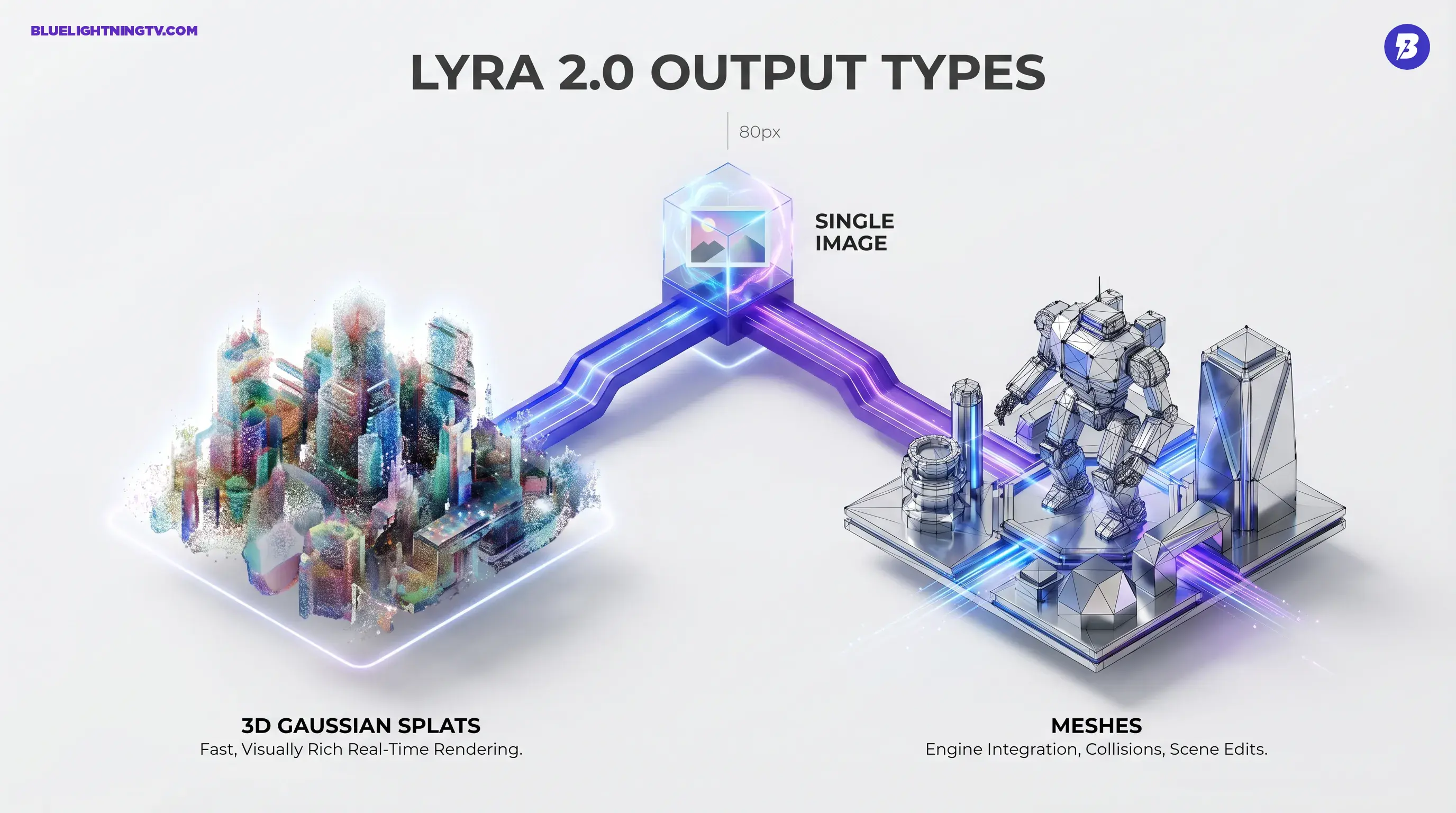

Lyra 2.0 does not only render a viewpoint. It produces exports intended for other tools:

- 3D Gaussian splats (3DGS), commonly exported as .ply, for fast, visually rich real-time rendering

- Meshes extracted from the reconstructed scene for workflows that need geometry (collisions, edits, engine integration)

That “and” is doing a lot of work. Splats can look great, but production pipelines still ask for meshes the minute you need physics, clean occlusion, or actual editability.
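Before dragging a splat export into an engine, it is worth peeking at its header. The sketch below parses a PLY header using only the standard library and flags files that look like Gaussian splats. It assumes the property naming popularized by the original 3DGS reference implementation (`f_dc_0`, `opacity`, `scale_0`, `rot_0`, and so on); whether Lyra’s exporter uses exactly those names is an assumption worth checking against a real file.

```python
# Byte sizes of the scalar property types allowed in a PLY header.
PLY_TYPE_SIZES = {
    "char": 1, "uchar": 1, "int8": 1, "uint8": 1,
    "short": 2, "ushort": 2, "int16": 2, "uint16": 2,
    "int": 4, "uint": 4, "int32": 4, "uint32": 4,
    "float": 4, "float32": 4, "double": 8, "float64": 8,
}

# Field names used by the original 3DGS reference exporter (assumed here).
GAUSSIAN_FIELDS = {"f_dc_0", "opacity", "scale_0", "rot_0"}


def read_ply_header(data: bytes) -> dict:
    """Summarize the vertex element of a PLY file from its raw leading bytes."""
    end = data.find(b"end_header")
    if end == -1:
        raise ValueError("no end_header found; not a PLY file or header truncated")
    lines = data[:end].decode("ascii", errors="replace").splitlines()
    if not lines or lines[0].strip() != "ply":
        raise ValueError("missing 'ply' magic line")

    fmt, vertex_count, props, current = None, 0, [], None
    for line in lines[1:]:
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "format":
            fmt = parts[1]  # ascii, binary_little_endian, or binary_big_endian
        elif parts[0] == "element":
            current = parts[1]
            if current == "vertex":
                vertex_count = int(parts[2])
        elif parts[0] == "property" and current == "vertex":
            if parts[1] == "list":
                raise ValueError("list properties on vertices are not handled here")
            props.append((parts[2], parts[1]))  # (name, type)

    names = [name for name, _ in props]
    return {
        "format": fmt,
        "vertex_count": vertex_count,
        "properties": names,
        # Only meaningful for binary formats; ASCII rows have variable width.
        "bytes_per_vertex": sum(PLY_TYPE_SIZES[t] for _, t in props),
        "is_gaussian_splat": GAUSSIAN_FIELDS.issubset(names),
    }
```

For a real export, reading the first 64 KB of the file, for example `read_ply_header(open("scene.ply", "rb").read(65536))` with `scene.ply` as a placeholder path, is typically enough to capture the whole header, since the binary payload starts right after `end_header`.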

Why persistence is the point

Most creator-facing “world” demos look impressive until you do anything normal: long moves, reversals, or revisiting a location. Lyra 2.0 is positioned around long-horizon consistency, which is NVIDIA speak for “the model does not forget what it already showed you.”

In plain creator terms, Lyra is aiming for:

- Spatial consistency: the layout does not reshuffle when you pan or backtrack

- Temporal stability: errors do not snowball over a longer trajectory

That second one is sneakily important. Drift is what makes teams stop trusting AI output. You cannot storyboard in a world that re-rolls its own reality mid-shot.
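A toy calculation shows why drift snowballs. Assume, purely for illustration, that each generated step independently preserves 99% of the scene’s consistency with what came before. That per-step figure is made up, not a measured Lyra number, but the compounding is the point: small per-step losses become large ones over a long trajectory.

```python
import math


def retained_consistency(per_step: float, steps: int) -> float:
    """Toy model: fraction of consistency left if every step keeps `per_step` of it."""
    return per_step ** steps


def steps_until_below(per_step: float, threshold: float) -> int:
    """Steps after which retained consistency reaches or drops below `threshold`.

    Assumes 0 < per_step < 1 and 0 < threshold < 1.
    """
    return math.ceil(math.log(threshold) / math.log(per_step))


for steps in (10, 100, 300):
    print(steps, round(retained_consistency(0.99, steps), 3))
# 10 0.904
# 100 0.366
# 300 0.049

print(steps_until_below(0.99, 0.5))  # 69
```

Under this toy model, a 1% per-step loss halves consistency in under 70 steps, which is why a model has to actively correct drift rather than simply keep per-frame errors small.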

Under the hood, lightly

NVIDIA’s project write-up frames Lyra 2.0 as addressing two core issues: spatial forgetting and temporal drifting. The system keeps a structured history of the scene as it generates, rather than treating each new view like a brand-new hallucination.

If you want the more formal details, the associated paper is here: arXiv: 2604.13036.
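The paper is the place for the real mechanism. As a loose mental model of what “keeping a structured history” can mean, here is a toy spatial memory: it records which previously generated frame was seen from which camera position, and when the camera returns near a spot, it retrieves those frames so the next view can be conditioned on them instead of invented fresh. `ViewMemory` is a hypothetical illustration of pose-based retrieval, not Lyra’s actual data structure.

```python
import math
from dataclasses import dataclass, field


@dataclass
class ViewMemory:
    """Toy spatial memory: which generated frame was seen from which camera position."""

    entries: list = field(default_factory=list)  # list of (position, frame_id)

    def add(self, position, frame_id) -> None:
        self.entries.append((tuple(position), frame_id))

    def nearest(self, position, k: int = 2, max_dist: float = math.inf) -> list:
        """Up to k frame ids captured closest to `position`, skipping any beyond max_dist."""
        ranked = sorted(self.entries, key=lambda e: math.dist(e[0], position))
        return [fid for pos, fid in ranked[:k] if math.dist(pos, position) <= max_dist]


# Walk forward along z, remembering one frame per unit step.
memory = ViewMemory()
for i in range(5):
    memory.add((0.0, 0.0, float(i)), frame_id=i)

# Back near the start: retrieve what was generated here before.
print(memory.nearest((0.0, 0.0, 0.2), k=2))  # [0, 1]
```

A linear scan is fine for a sketch; a real system would likely index poses spatially and also account for viewing direction, not just position.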

What creators can do with it

Let’s keep this grounded: Lyra 2.0 is not a replacement for environment artists, and it is not a one click “ship a level” button. But it is a powerful accelerant for the parts of production that usually crawl: ideation, blocking, and fast iteration.

Previs that does not collapse

If you are doing commercials, branded content, or narrative pitches, you often need an environment that holds up across multiple angles, even if it is not final-quality topology. Lyra 2.0’s revisitable framing is aimed right at that.

- Turn a mood frame into something you can scout

- Try multiple camera passes without regenerating from scratch

- Export out for additional lighting, blocking, or comps

World building for prototypes

For game teams and interactive studios, the speed win is obvious: start from a single concept image, generate a space you can explore, then export the representation for engine-side experimentation.

The catch: exported assets still need cleanup to become production-ready. But as a starting block, this is a different class of output than “here is a six-second clip.”

Marketing environments and flythroughs

Marketing teams increasingly want spatial content. Not just a hero image, but a space that can support multiple cuts, vertical crops, and walkthrough beats. Lyra 2.0’s approach fits that need: one approved visual direction becomes many angles.

Export reality check

Export is the promise, and also where reality shows up with a clipboard.

Here is the pragmatic view of each output type:

| Export type | Best for | Watchouts |
|---|---|---|
| 3D Gaussian splats | Fast, high-detail real-time renders | Can be heavy; editing is not the same as editing clean geometry |
| Meshes | Engine integration, collisions, scene edits | Reconstruction can be rough; expect cleanup and retopo for hero use |
| Walkthrough video | Quick review, pitch material | Still a render, not inherently editable |

This is why Lyra’s dual output approach matters. Splats give you the look; meshes give you the pipeline hooks.
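The “can be heavy” watchout is easy to put numbers on. In the uncompressed PLY layout written by the original 3DGS reference code, each Gaussian stores float32 values for position, normals, color as spherical-harmonic (SH) coefficients, opacity, scale, and a rotation quaternion. The sketch below assumes that layout; Lyra’s exporter may store more, fewer, or compressed attributes, and the Gaussian counts are illustrative.

```python
def splat_floats_per_gaussian(sh_degree: int = 3, include_normals: bool = True) -> int:
    """float32 values per Gaussian in an assumed 3DGS-reference-style PLY layout."""
    base = 3 + 3 + 1 + 3 + 4  # position, SH DC color (RGB), opacity, scale, rotation quaternion
    sh_rest = 3 * ((sh_degree + 1) ** 2 - 1)  # higher-order SH coefficients for RGB
    normals = 3 if include_normals else 0  # often written as zeros, but still stored
    return base + sh_rest + normals


def splat_file_mb(num_gaussians: int, sh_degree: int = 3, include_normals: bool = True) -> float:
    """Approximate uncompressed payload size in megabytes (header ignored)."""
    return num_gaussians * splat_floats_per_gaussian(sh_degree, include_normals) * 4 / 1e6


print(splat_floats_per_gaussian(3))           # 62
print(splat_file_mb(1_000_000))               # 248.0
print(splat_file_mb(1_000_000, sh_degree=0))  # 68.0
```

Dropping to SH degree 0 cuts per-Gaussian storage from 62 to 17 floats in this layout, at the cost of view-dependent shading, which makes it a common trade-off when a splat is too heavy for the target device.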

Limitations to plan around

Lyra 2.0 is exciting, but it is not magic. A few constraints show up consistently in this category of tool.

Static worlds, mostly

Lyra 2.0 is about environments, not dynamic scenes full of characters doing reliable, editable actions. If your use case depends on moving people, complex interactions, or shot-to-shot character continuity, this is not that tool.

Resolution and polish ceilings

Lyra 2.0’s released materials describe modest working resolutions for the image conditioned generation pipeline, which is fine for previs and prototyping but not the same as final pixel. You can absolutely use this to make decisions faster, but you are still going to finish in your actual production stack.

Cleanup is still part of the job

Exportable does not mean perfect. Expect the normal set of AI-to-3D chores:

- geometry artifacts in occluded regions

- inconsistent fine detail when pushing far from the seed image

- mesh extraction that needs work if you want animation-friendly topology

Lyra 2.0 reduces the “blank scene” problem. It does not eliminate the “make it shippable” part.
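A quick topology check tells you how much of that cleanup is waiting. The function below takes a triangle mesh as a list of vertex-index triples, loaded by whatever importer you use (the exported mesh format is an assumption here), and counts boundary edges, which usually mean holes or open borders such as regions the model never saw, and non-manifold edges, which many engine and sculpting tools reject or mishandle.

```python
from collections import Counter


def edge_report(faces) -> dict:
    """Count boundary and non-manifold edges in a triangle mesh of vertex-index triples."""
    edge_uses = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            edge_uses[(min(u, v), max(u, v))] += 1  # undirected edge key
    return {
        "edges": len(edge_uses),
        "boundary": sum(1 for n in edge_uses.values() if n == 1),  # used by one face: a hole or border
        "non_manifold": sum(1 for n in edge_uses.values() if n > 2),  # shared by 3+ faces
    }


# A closed tetrahedron: every edge is shared by exactly two faces.
print(edge_report([(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]))
# {'edges': 6, 'boundary': 0, 'non_manifold': 0}
```

A watertight, manifold result is a reasonable minimum bar before a mesh is used for collisions; a high boundary count is a hint to plan for hole filling or retopo.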

How this fits the bigger shift

We have been watching generative tools evolve from outputs into workspaces. Lyra 2.0 is part of that same movement, but for 3D: worlds as state, not clips as exports.

It also lands in a moment where multiple players are trying to make world models real. The difference is that Lyra is leaning hard into pipeline interoperability and open release, which tends to matter more than flashy demos once teams try to operationalize this stuff.

For a related take on interactive world models and persistence, see our coverage of Project Genie: Google Turns Prompts Into 3D Worlds.

What to watch next

If Lyra 2.0 is the serious prototype, the next creator-critical questions are straightforward:

- How clean are exports in real pipelines? Unity, Unreal, and Blender workflows do not forgive messy geometry.

- How controllable is layout, not just style? Art direction is more than “make it cyberpunk.”

- Does persistence survive longer sessions? A world that holds for 10 seconds is not a world.

- Do we get better edit handles? Layered scene elements, segmentation, material maps, things creators actually tweak.

Lyra 2.0 does not answer all of that yet. But it very clearly pushes the category forward, from “AI can imagine a place” to “AI can give you a place you can keep working with.”