OpenAI’s Model Spec is the closest thing the company has to a public operating manual for how ChatGPT class models behave. If you publish at scale, run brand safe automations, or build tools on the API, the Spec is not abstract policy. It is the logic that decides what the model will do when prompts get messy. Start with the primary source: OpenAI Model Spec (public version: 2025-09-12).

One quick cleanup before we get into it: a lot of drafts about this topic reference a specific March 2026 explainer page from OpenAI. The most canonical public references for how this works are the Model Spec itself and OpenAI’s posts explaining why it exists and how it evolves.

What the Spec is

OpenAI’s Model Spec is a public document describing how models should behave across scenarios: following instructions, handling conflicts, staying safe, and communicating clearly. Think of it as the behavioral contract between:

- You (the user asking for work)

- A developer (the team integrating the model into a product or workflow)

- OpenAI (the platform setting non negotiable constraints)

If you have ever had a model suddenly refuse something it used to do, or follow a user request that should have been blocked by brand rules, the Spec is the framework designed to make those outcomes less random and more predictable over time. For background on why OpenAI published it publicly, see Introducing the Model Spec.

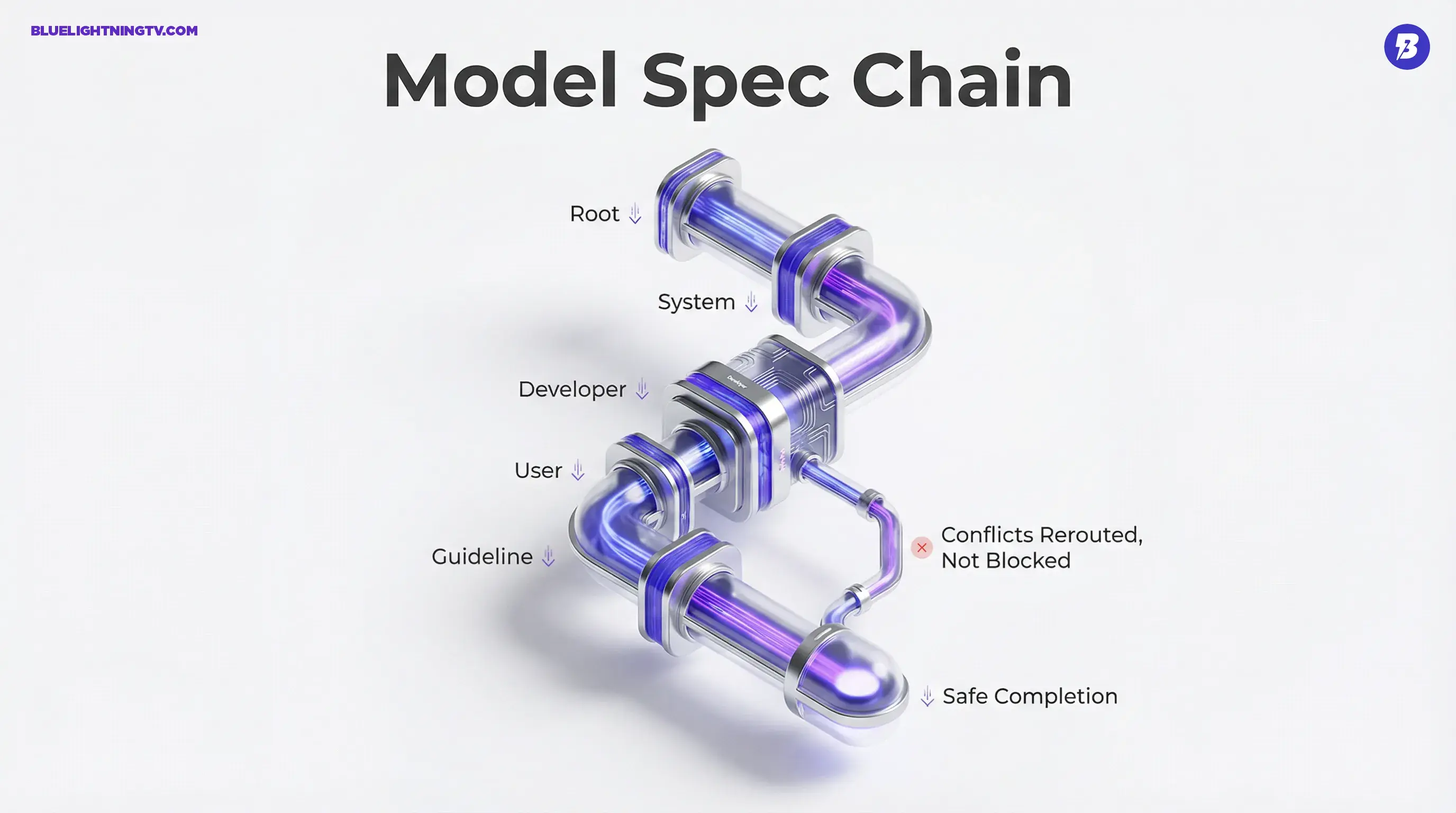

Chain of command

The most creator relevant part is the instruction hierarchy, meaning who the model listens to when instructions conflict. The public Model Spec establishes a chain of command with these authority levels:

Root → System → Developer → User → Guideline

That hierarchy is what determines whether your brand rules win over a spicy user prompt and whether the model can be helpful without stepping outside hard boundaries.

The five authority levels

Here is the hierarchy in plain terms, with what it means in real content workflows.

| Level | Who sets it | What it controls |

|---|---|---|

| Root | OpenAI | Non overridable rules (highest authority) |

| System | OpenAI or product | Global behavior constraints and defaults |

| Developer | App or API builder | Brand rules, formatting, workflow goals |

| User | End user | The prompt (what they want right now) |

| Guideline | Default behavior | Style norms, helpfulness behaviors |

The biggest operational implication: if you are building with the API, your developer instructions are supposed to outrank the user. That is how you make on brand mean something more durable than please keep it friendly.

Safe completion replaces dead ends

Creators do not just need safety. They need continuity. A hard refusal is a workflow break: the pipeline stops, a human has to re prompt, and now you are late.

OpenAI has described a shift toward safe completions, where models aim to provide the most helpful safe output possible instead of defaulting to hard refusals. OpenAI described this approach here: From hard refusals to safe completions: toward output centric safety training.

What this looks like in practice

Instead of:

- I cannot help with that.

You increasingly get:

- I cannot do that part, but here is what I can do safely, plus constraints, alternatives, or a toned down version of the request.

That is good news for production teams because it turns a no into a reroute. It also means you should expect more partial answers, which can be useful but may require tighter review if your team is used to binary refuse or comply behavior.

Brand voice: where it actually lives

Consistent voice is the thing everyone wants and few people operationalize. The Spec’s chain of command implies a best practice for creator teams:

- Put your voice plus compliance rules in developer level instructions (or equivalent scaffolding in your tool).

- Do not rely on repeating write in our voice in every user prompt.

If your workflow is user prompt only, your brand constraints are competing in the same authority layer as make it edgier or ignore that rule. The Spec is designed so user level requests should not override developer level boundaries.

A common failure mode

When teams say the model forgot our tone, what they often mean is:

- The tone was provided as a low authority reminder

- The user asked for something that pulled in a different direction

- The model did exactly what the hierarchy allowed

Not malicious. Not broken. Just following the chain of command you implicitly set.

Agentic behavior is the real subtext

The Spec is not only about chat. It is built for a world where models take multi step actions: calling tools, operating UIs, coordinating tasks, and making decisions that have side effects.

That matters because creators are increasingly using LLMs as operators, not just writers:

- repurposing a long video into 30 platform native assets

- pulling analytics, summarizing performance, drafting next week direction

- managing asset naming, metadata, and upload checklists

- running multi step formatting and QA passes before publishing

In those workflows, instruction conflicts are constant. The Spec’s hierarchy exists largely to prevent agent energy from turning into agent chaos.

For OpenAI’s broader framing of how the Spec evolves, and what values it tries to encode, see Sharing the latest Model Spec.

What changes for creators

This is the part that hits your calendar, not your curiosity.

1) Your outputs can shift without new features

If OpenAI updates how the Spec is interpreted or trained into behavior, you can see differences in:

- sensitivity around gray areas

- how strongly developer rules are enforced

- whether the model asks clarifying questions vs guessing

- how often it safe completes vs refuses

Treat behavior changes like production changes. If you run repeatable content systems, periodically regression test the prompts you depend on, especially around claims, health, finance, or anything with brand risk.

2) Model choice becomes governance

As more teams route work across multiple models, fast and cheap for drafts, deeper for final passes, the Spec becomes the baseline for consistent behavior across routing.

You do not want:

- Model A follows your style guide

- Model B ignores it

- Model C refuses everything for no reason

Publishing a Spec is meant to reduce that three different personalities in one pipeline feeling. In practice, teams still need to test. Specs are promises. Production is reality.

3) More leverage for developers

If you are a creator who also builds tools, the Spec is basically giving you permission to be stricter at the developer layer:

- enforce formatting

- block disallowed topics

- require citations or structured fields

- constrain tone and claims

Because developer instructions outrank user prompts, your system is less likely to get yanked off the rails by a last minute make it controversial request from a stakeholder who just discovered engagement metrics.

Quick reality check

The Model Spec is not a magic shield.

- It improves predictability, not perfection.

- Safe completions can still produce output you should not publish without review.

- The hierarchy helps, but your implementation still matters, meaning where you place rules, how explicit they are, and whether you test edge cases.

The Spec does not replace editorial judgment. It makes judgment easier to apply at scale.

Bottom line

OpenAI’s Model Spec is the hidden infrastructure behind why the model answered that way. For creators, its biggest value is practical: it formalizes a chain of command that makes brand constraints, safety boundaries, and agentic workflows more predictable, especially when prompts conflict, deadlines hit, and someone asks the model to do something slightly unhinged.