ByteDance has temporarily disabled a Seedance 2.0 capability that could generate a personal sounding synthetic voice from a single face photo, a mode some users saw labeled as Human Reference. Reporting from TechNode describes the feature as turning facial photos into personal voices and notes ByteDance pulled it after concerns about potential misuse escalated quickly.

The broader Seedance 2.0 stack remains in testing and available only in limited rollout through ByteDance’s apps and creator ecosystem, but this specific face to voice path is now paused while the company retools safeguards.

Read the coverage here: TechNode’s report on the suspension.

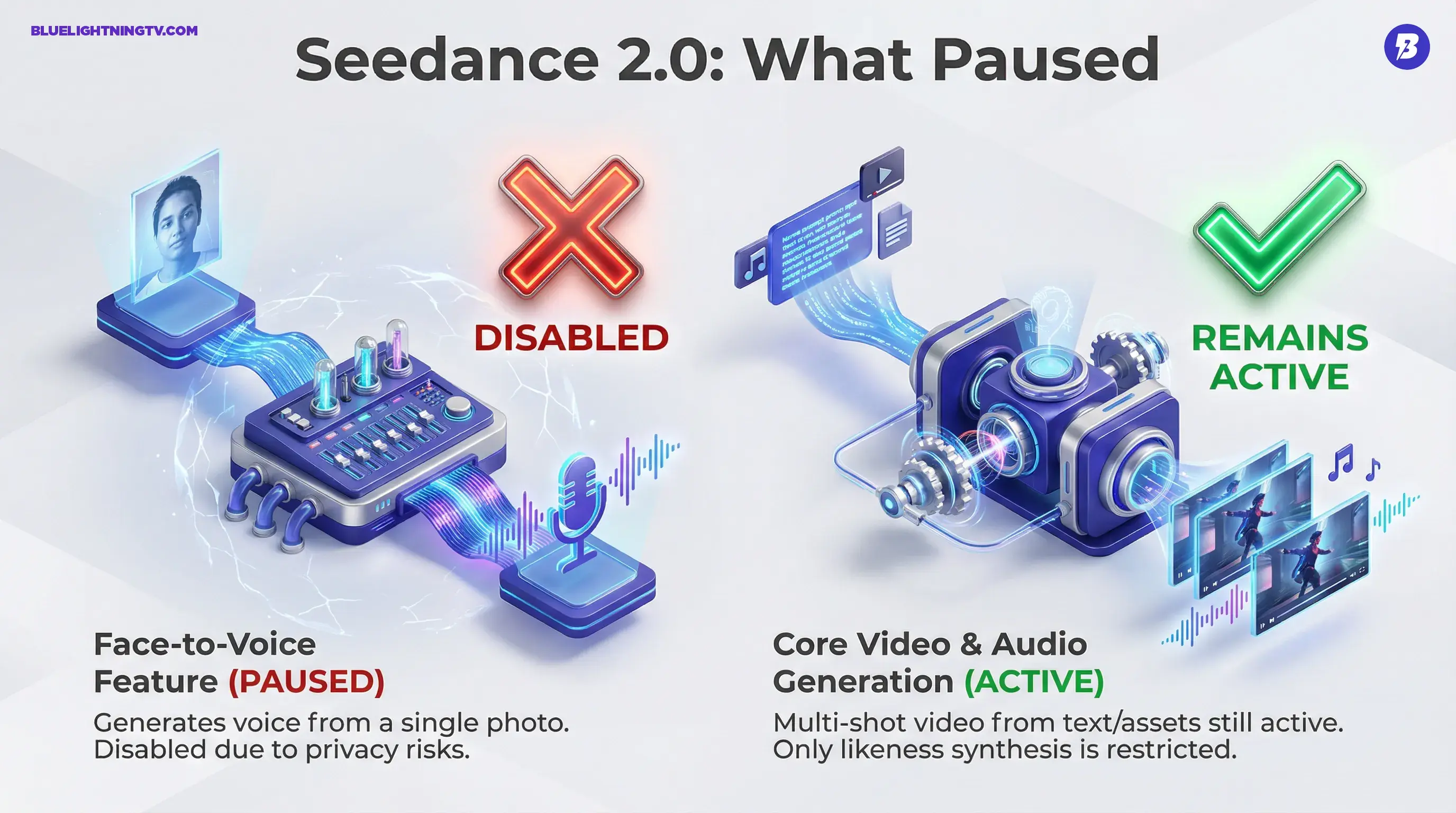

What got paused

Human Reference explained

Seedance 2.0’s controversial trick was not voice cloning in the traditional sense where you feed the model audio. It was closer to voice inference: upload a photo, and the system could output a voice that, in reported tests, could sound very close to the person in the image without requiring an audio sample.

That missing audio requirement is what set off alarms. A single image is plentiful and easy to grab. Audio is usually harder to obtain or at least harder to pretend you got consensually. Removing the audio step is what made this feel like a new tier of risk.

When a tool can translate a face into a voice, identity stops being a single medium problem. It becomes a pipeline problem.

What Seedance 2.0 is

More than video gen

The face to voice headline is grabbing attention, but Seedance 2.0 sits inside a bigger shift: AI video tools are trying to become end to end production systems, not just make a clip from a prompt.

In recent coverage and platform descriptions, Seedance 2.0 is positioned around:

- Multimodal inputs, including text plus reference files

- Multi shot generation, more scene sequence than one off snippet

- Native audio alongside visuals, including speech and background sound beds

- Improved lip sync and facial performance consistency

CGTN framed the model as a step toward digital director style tooling, emphasizing multi shot control and a tighter loop between script and output. CGTN’s overview leans into that narrative.

Why this crossed a line

The missing consent step

In generative media, the consent friction often lives in the inputs. If a system needs 30 seconds of your voice, there is at least a moment where you could notice something is off, or platforms can more plausibly enforce do not upload other people’s recordings.

A face photo is different. Faces are everywhere: profiles, press shots, thumbnails, screenshots, and endless reposts.

So the privacy concern is not abstract. It is operational: the barrier to impersonation drops when the model does not need a voice sample to output a voice that resembles you.

Audio and video now ship together

The broader context is the video with audio wave. When a system generates speech and visuals together, the usual safety approach of moderating audio and video separately gets harder.

You are no longer dealing with isolated artifacts. You are dealing with a single fused output that is already persuasive.

What still works

Seedance without face to voice

ByteDance is not pulling Seedance 2.0 wholesale. Based on reporting, the suspended portion is the face photo to personal voice feature and related real person reference usage. The rest of the creation pipeline stays usable for creators who have access.

Practically, that means most teams can still:

- Generate video from text prompts and supported reference assets

- Use stock or system voices rather than inferred personal voices

- Produce multi shot sequences with consistent character styling

- Keep experimenting with rapid concepting for ads, shorts, and social first stories

| Workflow piece | Before pause | Now |

|---|---|---|

| Video generation | Available in limited rollout | Available in limited rollout |

| Audio generation | Available in limited rollout | Available in limited rollout |

| Voice from face photo | Available | Disabled |

What ByteDance may add next

Verification gets real

TechNode reports ByteDance moved to suspend real person image or video references and introduced additional verification steps in its apps tied to avatar creation, pointing toward stricter protections before anything like this returns.

In the broader industry, that usually means some mix of:

- Liveness checks to prove you are a live person

- Identity verification to prove you are the subject or authorized

- Permissioned reference libraries of licensed or consented faces and voices

- Harder gating for vetted accounts only

Creators should read this as product reality, not a moral lecture: higher power likeness features are trending toward gated access because open access plus real person synthesis is a combination that tends to explode.

The real takeaway

Convergence is the story

The most important development is not the pause itself. It is what the paused feature implies: generative tools are collapsing boundaries between modalities.

Face to voice to performance to video is the direction. Each time a tool removes an input requirement, no audio needed, no training needed, no manual rigging needed, it boosts creative velocity while raising the stakes of misuse.

Seedance 2.0 still matters because it is chasing what every serious gen video platform is chasing: coherent multi shot, audio synced output that is usable without a post production rescue mission. ByteDance just learned publicly that if you bundle identity inference into that stack, the rollout cannot be casual.