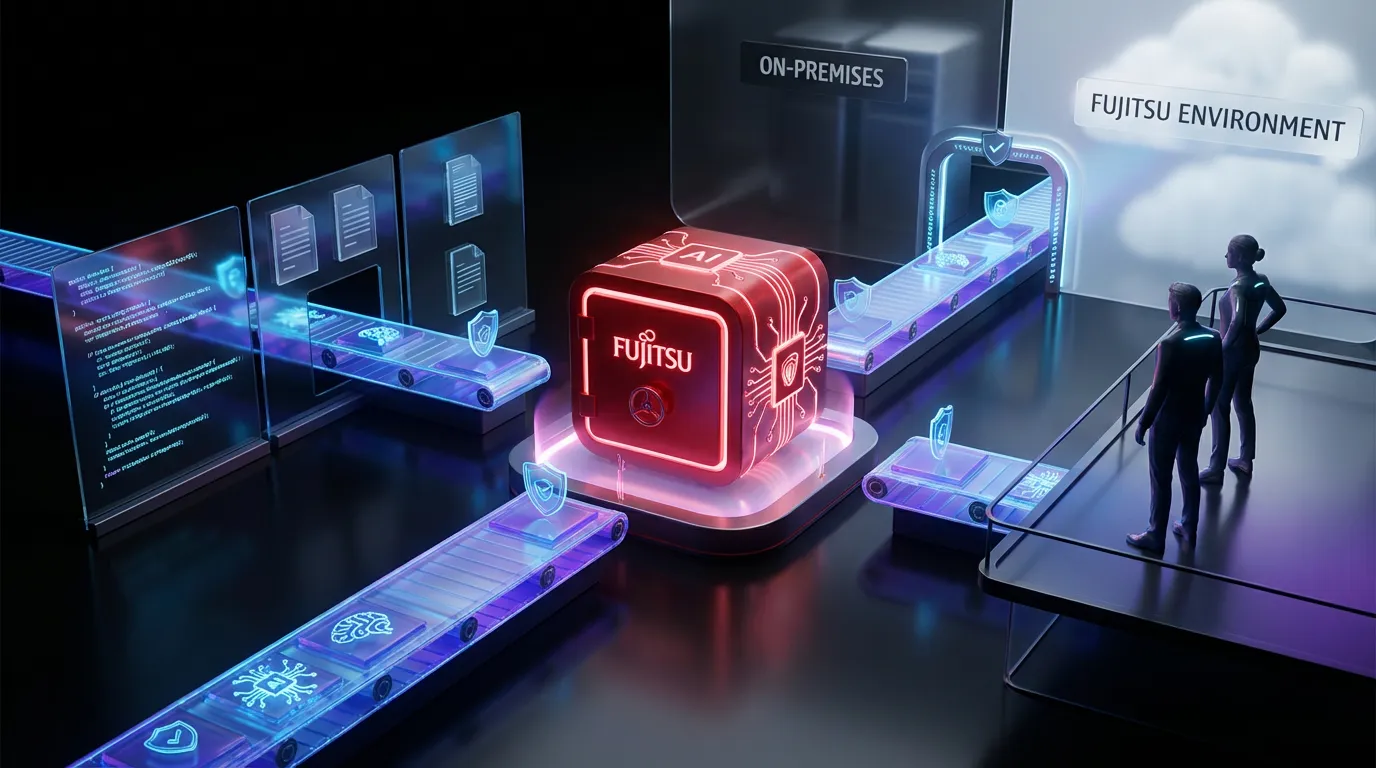

Fujitsu just rolled out its Kozuchi Enterprise AI Factory, a new platform aimed squarely at enterprises that want private, customizable GPT-style systems but do not want their data, prompts, or internal workflows taking a field trip through a public SaaS stack. Fujitsu positions Kozuchi as a “dedicated environment” option: run it on-premises or in a Fujitsu-provided dedicated environment, with tooling intended to manage the generative AI lifecycle end to end. The official details are in the Fujitsu press release.

If you have been watching enterprises wrestle with genAI, this launch lands in the most predictable place possible: companies want the power of LLMs, but not the hope-and-pray governance model that comes with duct-taping policies onto a general-purpose API. Kozuchi is Fujitsu’s attempt to productize what a lot of big orgs are already building internally: a private LLM platform with safety rails, auditability, and operational controls that security teams can live with.

The vibe here is not “AI will change everything.” It is “we need genAI in production, and our risk team will absolutely block it unless it is contained, monitored, and provable.”

What Fujitsu launched

Kozuchi Enterprise AI Factory is positioned as a full-stack platform for building and operating generative AI inside a dedicated environment. Fujitsu’s framing is explicit: enterprises can “autonomously manage” the lifecycle across development, operations, and continuous improvement (including model customization and optimization) while keeping sensitive data in a controlled environment.

At a practical level, the platform bundles several categories of capabilities:

- Dedicated environment choices (customer site or Fujitsu-provided environment)

- Lifecycle management for models and agents

- Safety and trust tooling (vulnerability scanning plus guardrails)

- Model customization including in-house fine-tuning

- Optimization via lightweighting and quantization to reduce resource use

Fujitsu also anchors the platform around “Takane,” its enterprise-focused Japanese language model developed via its partnership with Cohere (background on that partnership: Fujitsu and Cohere announcement). The important point: Kozuchi is not just bring-your-own-model infrastructure. Fujitsu wants to sell a packaged enterprise AI stack where model, platform, and controls arrive together.

Why it matters now

The enterprise genAI conversation has shifted. The early wave was: “Can we get a chatbot?” The current wave is: “Can we put LLMs into workflows that touch customer data, financial logic, regulated documentation, or internal IP without creating a security incident we have to explain for the next two quarters?”

Kozuchi’s timing makes sense because three things are converging:

- Private genAI is becoming the default ask in regulated or IP-heavy orgs

- Agentic workflows are moving from demos to pilots, increasing blast radius

- Security teams are demanding evidence (logs, policies, monitoring, repeatable evaluations) rather than promises

Fujitsu is targeting the buyer who says: “We are not anti-AI. We are anti-unknowns.”

Inside the stack

Fujitsu is essentially proposing a control plane for private GPT operations. Not just “host a model,” but run the full machine: deploy, monitor, govern, improve, and defend.

Deployment options

Kozuchi supports two main patterns:

- On-premises installation where traffic and data are kept within the organization

- Fujitsu-provided dedicated environment for teams that want isolation without owning the hardware lifecycle

This matters because “private” means different things across industries. Some orgs need physical control; others need contractual and architectural isolation. Kozuchi is trying to cover both without forcing a cloud-first dependency.

Lifecycle control

Where a lot of enterprise LLM rollouts get messy is versioning and change control. The moment you have multiple departments spinning up agents, prompt templates, fine-tunes, and retrieval sources, you need:

- Repeatable deployment workflows

- Visibility into what changed and who approved it

- Rollback mechanics when something breaks or fails evaluation

- Operational monitoring that is more than “it seems fine”

Fujitsu’s pitch is that Kozuchi centralizes that lifecycle so AI is not managed like a side project.
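To make the change-control checklist concrete, here is a minimal sketch of what "who approved it" and "rollback" can look like in code. Everything in it is hypothetical: the `Release` and `Registry` names, the rule that an author cannot approve their own change, and the artifact strings are assumptions for illustration, not Kozuchi's API or data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Release:
    """One immutable, approved version of a deployable artifact (hypothetical)."""
    version: int
    artifact: str      # e.g. a prompt template name or a fine-tune identifier
    author: str
    approver: str
    created_at: datetime


@dataclass
class Registry:
    """Append-only release history with an explicit 'active' pointer."""
    history: List[Release] = field(default_factory=list)
    active: Optional[int] = None  # version number currently serving traffic

    def deploy(self, artifact: str, author: str, approver: str) -> Release:
        # Assumed policy for this sketch: changes need a second person.
        if author == approver:
            raise ValueError("author cannot approve their own change")
        release = Release(
            version=len(self.history) + 1,
            artifact=artifact,
            author=author,
            approver=approver,
            created_at=datetime.now(timezone.utc),
        )
        self.history.append(release)
        self.active = release.version
        return release

    def rollback(self) -> Release:
        """Point 'active' at the previous version; history is never deleted."""
        if self.active is None or self.active <= 1:
            raise RuntimeError("no earlier release to roll back to")
        self.active -= 1
        return self.history[self.active - 1]
```

The design choice worth noting is that rollback moves a pointer instead of deleting anything, so the audit trail of what changed and who approved it survives the incident that triggered the rollback.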

Safety without theater

The headline number in Fujitsu’s release is hard to miss: a vulnerability scanner capable of detecting over 7,700 vulnerability types, including Fujitsu-defined ones, paired with guardrail technologies designed to catch prompt injection and other malicious behavior. Fujitsu describes guardrails that operate before execution and during runtime, plus automated rule generation and application to guardrails based on issues detected by scanning.

Here is the key distinction: Fujitsu is not describing safety as “we filter bad words.” They are describing it as operational security, an ongoing process that includes scanning, detection, enforcement, and iteration.

What “7,700 checks” signals

A big vulnerability catalog does not automatically equal better security, but it does signal two important things:

- Fujitsu is treating genAI as attackable infrastructure, not a product feature

- Enterprises will get something procurement teams can understand: a measurable control layer

For context, Fujitsu has been investing in AI security capabilities beyond this platform, including multi-agent security approaches that coordinate detection and defense (see: Fujitsu multi-AI agent security technology). Kozuchi looks like a packaging of that posture into something deployable.

Guardrails that are actually useful

Fujitsu’s guardrails are aimed at:

- Prompt injection attempts (especially relevant for agentic, tool-using systems)

- Inappropriate outputs and unexpected behavior

- Runtime suppression and enforcement rather than post-hoc cleanup

In other words: the platform assumes users (or attackers) will try to be clever, and it wants to block the classic failures before they become incident tickets.

If your genAI system can call tools, hit internal docs, or trigger actions, prompt injection is not a theoretical risk. It is the plot.
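The "before execution and during runtime" split is easier to picture as two checkpoints around a model call. The sketch below is a toy under loud assumptions: the regex patterns, the `check_input` / `check_output` / `guarded_call` names, and the redaction behavior are all invented for illustration. Fujitsu has not published how its guardrails are implemented, and real injection detection needs far more than keyword matching.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical patterns for illustration only.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all|any|previous|prior) .*instructions", re.IGNORECASE),
    re.compile(r"reveal .*system prompt", re.IGNORECASE),
]
SECRET_PATTERN = re.compile(
    r"\b(?:api[_-]?key|password)\s*[:=]\s*\S+", re.IGNORECASE
)


@dataclass
class Verdict:
    allowed: bool
    reason: str


def check_input(prompt: str) -> Verdict:
    """Pre-execution checkpoint: refuse before the model or any tool runs."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(prompt):
            return Verdict(False, f"input matched {pattern.pattern!r}")
    return Verdict(True, "ok")


def check_output(text: str) -> str:
    """Runtime checkpoint: redact secret-looking strings before they leave."""
    return SECRET_PATTERN.sub("[REDACTED]", text)


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    """Wrap any callable model with both checkpoints."""
    verdict = check_input(prompt)
    if not verdict.allowed:
        return f"Blocked: {verdict.reason}"
    return check_output(model(prompt))
```

Passing the model in as a plain callable keeps the guardrail independent of whichever model sits behind it, which is the property that matters if the same policy has to cover several agents.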

Customization and in-house tuning

Kozuchi emphasizes in-house fine-tuning so enterprises can adapt models to domain language and workflows while keeping training data internal. That is the correct enterprise posture: the more valuable your data, the less you want it exposed, especially during tuning, evaluation, or agent training loops.

Fujitsu also leans into model optimization, claiming memory reductions of up to 94% from its lightweighting and quantization approach. This is consistent with Fujitsu’s broader messaging around Takane-centered optimization efforts (example coverage: PR Newswire write-up). Practically, this is about making private deployments less painful: fewer GPUs, more predictable costs, more feasible on-prem scenarios.
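A number like 94% is easier to sanity-check with the underlying arithmetic. The article's source material does not say which bit widths produce the figure, so treat the following as an assumption: going from 16-bit weights to 1-bit weights saves 1 - 1/16 = 93.75% of weight storage, which rounds to about 94%. The 7-billion parameter count below is likewise hypothetical and is not a Takane specification.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate storage for model weights only (no activations or KV cache)."""
    return num_params * bits_per_weight / 8 / 2**30


def reduction(from_bits: float, to_bits: float) -> float:
    """Fraction of weight memory saved when moving between bit widths."""
    return 1 - to_bits / from_bits


if __name__ == "__main__":
    params = 7e9  # hypothetical model size, chosen only for illustration
    for bits in (16, 8, 4, 1):
        size = weight_memory_gib(params, bits)
        saved = reduction(16, bits)
        print(f"{bits:>2}-bit: {size:6.2f} GiB, {saved:.1%} smaller than 16-bit")
```

This counts weights only. Activations, KV cache, and runtime overhead are not shrunk by the same factor, so the whole-system saving on real hardware would likely be smaller than the headline number.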

What enterprises should compare

A platform like this lives or dies by how it performs against the real-world checklist: security posture, integration, and operational sanity. Here is how Kozuchi’s positioning stacks up to the decision criteria most teams are using right now.

| Enterprise requirement | Kozuchi approach | Practical implication |
|---|---|---|
| Data containment | Dedicated environment (on-premises or Fujitsu-provided) | Enables private GPT use cases where public APIs are blocked |
| Operational governance | Central lifecycle management plus auditing | Easier change control, rollback, and ownership clarity |
| Attack resistance | Vulnerability scanning plus runtime guardrails | Better baseline defense for agents and internal integrations |
| Cost control | Lightweighting plus quantization | More models and agents per hardware footprint |

Rollout and availability

Fujitsu says preliminary trial registration begins on February 2, 2026, with features rolling out progressively starting in February and an official launch planned for July 2026, starting sequentially in Japan and Europe. That phased approach is typical for enterprise platforms, but it also suggests Kozuchi is meant to be embedded into real operations, not shipped as a beta playground.

The real implication

Kozuchi Enterprise AI Factory is part of a bigger trend: genAI is becoming infrastructure, and the winners in enterprise adoption will not be the flashiest demos. They will be the stacks that make AI operable.

What Fujitsu is betting on is simple: the next wave of genAI value will not come from giving employees a chatbot. It will come from private, specialized agents that sit inside workflows: summarizing internal knowledge, drafting regulated documents, supporting service desks, accelerating engineering, and coordinating multi-step tasks. And those systems cannot be “trust us” products. They need controls that are legible to security, compliance, and IT operations.

Kozuchi does not remove the hard work: data readiness, evaluation design, access control, integration. But it does attempt to standardize the part enterprises hate rebuilding from scratch: the factory floor where private GPTs actually run.