PixVerse is making a loud, very creator-coded statement with PixVerse R1: stop thinking of AI video as "generate a clip, wait, redo" and start thinking "direct a living scene." The company is previewing R1 as a real-time, interactive world model that keeps continuity while you steer what happens, more like a game engine session than a prompt-to-video vending machine.

That is the headline. The subtext is bigger: if this works at scale, R1 could shift AI video from rendered assets to responsive media, meaning content that updates as fast as you can make decisions. For creators who live in timelines, comments, remixes, and rapid iteration, that is not just a feature. It is a different medium.

AI video has been stuck in clip mode. R1 is pitching world mode: persistent state, instant feedback, and direction-by-interaction.

What PixVerse is previewing

PixVerse frames R1 as a system built to maintain state, presence, and continuity while responding instantly to user input. Instead of a one-and-done output, you are effectively running an ongoing generative stream where changes propagate forward without resetting the scene.

PixVerse’s own write-up positions R1 as a next-generation real-time world model, describing three pillars: an Omni native multimodal foundation model, a Memory component for consistent infinite streaming via an autoregressive mechanism, and an Instantaneous Response Engine designed to reduce latency and keep the loop tight (PixVerse announcement).

Why this matters

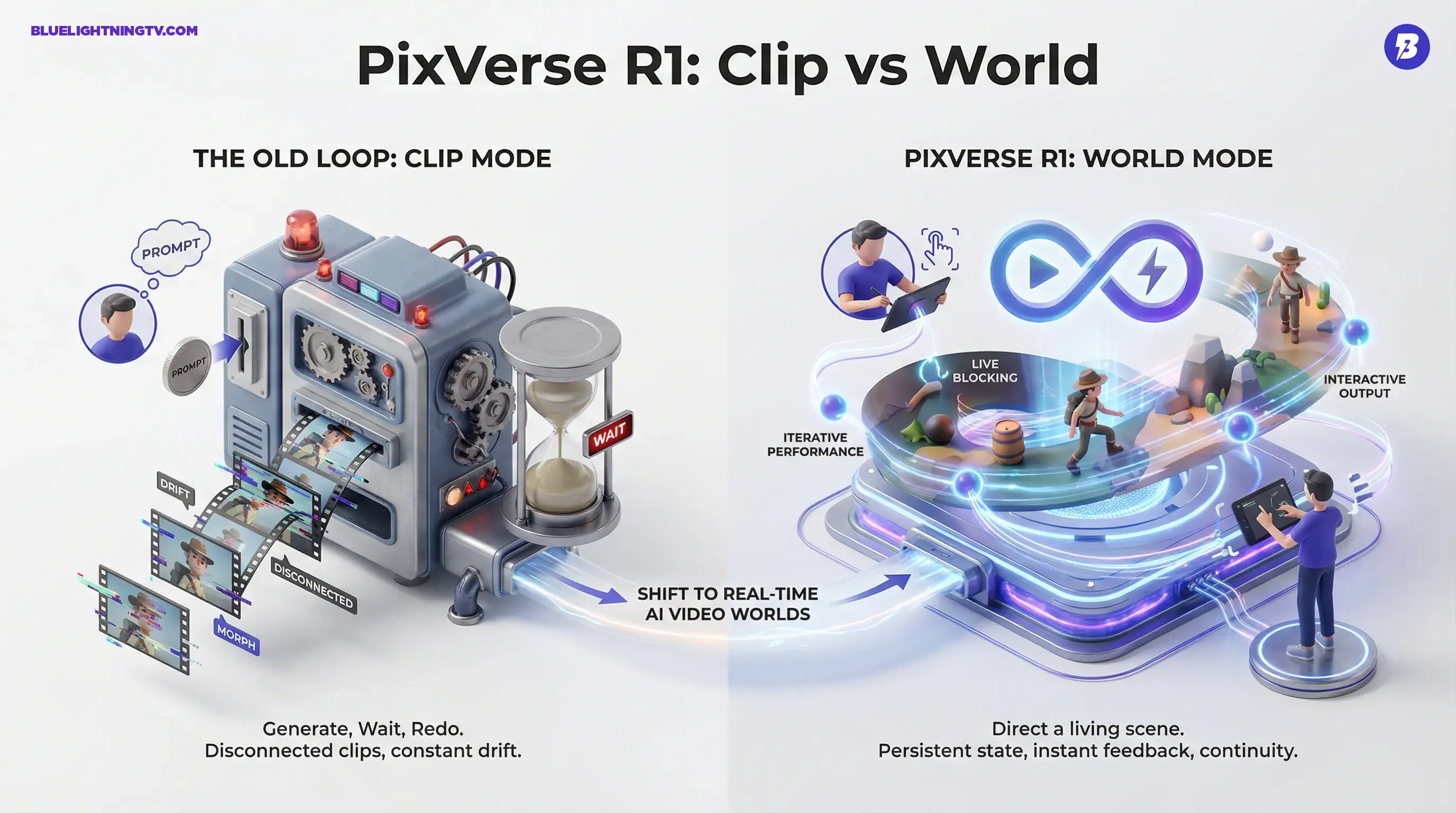

Most AI video tools today are excellent at producing moments (short, impressive clips) and then fall apart when you try to produce continuity. Even with character reference features and "keep style" toggles, creators still spend time fighting drift: faces morph, outfits change, props teleport, and camera logic gets interpretive.

R1 is aimed directly at that pain. If a system can maintain a stable world state while you direct it live, you get new workflows that look less like "export, edit, re-render" and more like:

- Live blocking: move the character, change the camera, adjust the environment, keep rolling

- Iterative performance: direct the same scene multiple ways without starting from scratch

- Interactive output: content shaped by a creator and an audience in real time

That last point is the creator economy siren song. Interactive media is already how people spend time (games, streams, social). Video that behaves like an interactive system, without traditional production overhead, opens doors for formats that do not fit neatly into "post a finished clip."

From clips to worlds

Here is the practical difference between classic prompt-to-video and what PixVerse is describing.

The old loop

You prompt, it renders, you watch, you revise the prompt, and it renders again.

The problem is not just waiting. It is that each render is a fresh reality unless the tool is unusually good at maintaining context.

The R1 pitch

You are inside a persistent scene. You add direction and the system updates continuously. The world has memory: characters, environment state, and prior actions do not get wiped every time you tweak one thing.

PixVerse is leaning on an autoregressive infinite streaming idea, meaning the system generates forward in time while trying to keep the internal logic consistent as the stream continues (PixVerse R1 overview).
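To make the autoregressive streaming idea concrete, here is a toy sketch in Python. It is not PixVerse's implementation: `next_frame`, the window size, and the integer "frames" are hypothetical stand-ins. It only shows the shape of the loop, where each new frame is computed from a bounded memory of recent frames plus the latest direction, and the stream never restarts.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, Optional


def next_frame(memory: Deque[int], direction: Optional[int]) -> int:
    """Hypothetical stand-in for a model step.

    For simplicity it builds on the most recent remembered frame and
    adds the direction if one was given, otherwise a default step of 1.
    """
    base = memory[-1] if memory else 0
    return base + (direction if direction is not None else 1)


def stream(directions: Iterable[Optional[int]], window: int = 4) -> Iterator[int]:
    """Yield frames one at a time, remembering only the last `window` frames."""
    memory: Deque[int] = deque(maxlen=window)
    for direction in directions:
        frame = next_frame(memory, direction)
        memory.append(frame)  # the new frame becomes context for the next one
        yield frame


# No direction for two steps, then a steer of +10, then no direction again.
frames = list(stream([None, None, 10, None]))
print(frames)  # [1, 2, 12, 13]
```

The steer at the third step carries into the fourth: the scene continues from where the change left it instead of resetting.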

What real-time implies

Real-time is the most abused phrase in tech, so let us pin down what it would mean for creators if R1 delivers.

Creative loop compression

If the feedback is truly immediate, or close enough that it feels immediate, you stop working like an editor and start working like a director. That is a big deal for:

- creators who think visually and iterate by watching

- small teams that cannot afford long render cycles

- social content where speed beats perfection

PixVerse says its Instantaneous Response Engine reduces sampling to 1 to 4 steps for low-latency updates and describes real-time 1080p output as a target in its technical materials (technical overview).
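A quick back-of-the-envelope calculation shows why the step count matters. The sketch below assumes, as a simplification, that every frame must be sampled within its own time slot. The per-step cost and fixed overhead are hypothetical figures, not numbers from PixVerse.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def max_steps_within_budget(fps: float, step_ms: float, overhead_ms: float = 0.0) -> int:
    """Largest whole number of sampling steps that fits in one frame's budget."""
    if step_ms <= 0:
        raise ValueError("step_ms must be positive")
    available = frame_budget_ms(fps) - overhead_ms
    if available <= 0:
        return 0
    return int(available // step_ms)


# Hypothetical numbers: 24 fps, 10 ms per sampling step, 5 ms fixed overhead.
print(round(frame_budget_ms(24), 2))                            # 41.67
print(max_steps_within_budget(24, step_ms=10, overhead_ms=5))   # 3
```

Under these made-up numbers only three steps fit per frame, which is why a sampler that needs dozens of steps cannot feel live and one that needs 1 to 4 might.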

Continuity becomes a default

If the world state persists, creators can focus less on "please keep the same jacket" and more on story beats: blocking, pacing, timing, and comedic rhythm, the stuff audiences actually notice.

Features creators should clock

PixVerse’s positioning highlights a cluster of capabilities that, together, signal a shift from generator to engine.

Persistent world state

The system tracks what has already happened (character identity, object positions, environmental conditions) and tries to keep it coherent as you continue.

Live scene direction

Instead of issuing a new render request, direction becomes incremental: add actions, modify constraints, adjust camera intent.
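One way to picture incremental direction is as small updates applied to a state that otherwise carries over. The sketch below is purely illustrative: `SceneState`, its fields, and `direct` are hypothetical names, not part of any PixVerse interface.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class SceneState:
    """Hypothetical scene state; the field names are illustrative only."""
    character_outfit: str = "red jacket"
    camera: str = "wide shot"
    weather: str = "clear"
    history: Tuple[str, ...] = ()


def direct(state: SceneState, note: str, **changes: str) -> SceneState:
    """Apply only the named changes; every other field carries over."""
    return replace(state, history=state.history + (note,), **changes)


scene = SceneState()
scene = direct(scene, "push in on the character", camera="close-up")
scene = direct(scene, "storm rolls in", weather="rain")
print(scene.character_outfit, scene.camera, scene.weather, len(scene.history))
# red jacket close-up rain 2
```

Neither direction mentioned the jacket, so it is still there. In a clip-based loop, each of those changes would be a fresh render with no such guarantee.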

Multimodal foundation

PixVerse describes an Omni native multimodal foundation model that unifies text, image, audio, and video. For creators, that matters because it hints at smoother handoffs between inputs (reference images, text direction, and audio inputs) rather than bolted-on features.

Developer surface area

PixVerse’s materials describe a platform and API surface for developers, with documented concurrency limits by plan. If PixVerse actually makes R1 broadly accessible beyond demos, it is the difference between "cool product" and "ecosystem ingredient."
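For developers, a per-plan concurrency limit usually means capping in-flight requests on the client side. Here is a minimal sketch using Python's `asyncio.Semaphore`. The limit of 2 is a hypothetical value, and the `asyncio.sleep` call stands in for a real network request; no actual PixVerse endpoint or client is shown.

```python
import asyncio

MAX_CONCURRENT = 2  # hypothetical plan limit, not a real PixVerse figure


async def run_jobs(job_count: int, limit: int = MAX_CONCURRENT) -> int:
    """Run placeholder jobs under a concurrency cap; return the peak in flight."""
    gate = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def job() -> None:
        nonlocal in_flight, peak
        async with gate:  # wait here if `limit` jobs are already running
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(0.01)  # stands in for a real network call
            in_flight -= 1

    await asyncio.gather(*(job() for _ in range(job_count)))
    return peak


peak = asyncio.run(run_jobs(job_count=5))
print(peak)  # 2
```

Five jobs are submitted, but never more than two run at once. The rest wait at the semaphore instead of tripping the limit.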

How it changes workflows

R1’s most realistic impact is not that everyone suddenly makes interactive films. It is that a bunch of everyday creator workflows get faster and less brittle.

Social production gets less linear

Today, "iterate" often means re-rolling clips until something hits, then stitching in an editor. With a live world, iteration can happen inside the scene: re-block the action, change the beat, adjust the camera, keep the same setting and characters.

Brand content gets more personalized

Interactive video ads are usually expensive because they rely on branching edits and pre-rendered variations. A persistent world model suggests a path toward dynamic variations where the environment can adapt while staying consistent, without building 50 different timelines.

Prototyping becomes playable

If you can play a scene like an interactive sandbox, narrative prototyping starts looking like rapid improv. That is attractive for studios and indie teams testing ideas before committing to full production.

What is still unclear

PixVerse’s preview is ambitious, and ambition is great, but creators should keep expectations grounded until more people can stress-test it.

Latency under real load

Real-time demos can be pristine; real-time at scale is where the dragons live. Performance will matter most when many users are interacting and expecting the same instant feel.

Control vs. chaos

Interactive generation raises the question: how deterministic can it be? Creators will want the ability to lock key elements (character identity, wardrobe, set layout) so that "live" does not mean "random."
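What "locking" could look like is easy to sketch, even though PixVerse has not described such a control in the material covered here. In this hypothetical example, a direction may touch any element, but changes to locked keys are silently dropped. The key names and the `apply_direction` helper are assumptions made for illustration.

```python
from typing import Dict, Set


def apply_direction(
    state: Dict[str, str], changes: Dict[str, str], locked: Set[str]
) -> Dict[str, str]:
    """Return a new state with `changes` applied, skipping locked keys."""
    allowed = {key: value for key, value in changes.items() if key not in locked}
    return {**state, **allowed}


state = {"identity": "Mara", "wardrobe": "red jacket", "camera": "wide shot"}
locked = {"identity", "wardrobe"}

# A direction that tries to change both a locked and an unlocked element.
updated = apply_direction(state, {"wardrobe": "blue coat", "camera": "close-up"}, locked)
print(updated)
# {'identity': 'Mara', 'wardrobe': 'red jacket', 'camera': 'close-up'}
```

A real tool might reject the request or warn instead of ignoring it. The point is that the creator, not the sampler, decides what is allowed to change.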

Output ownership and pipeline fit

Even if the stream is amazing, creators still need to export, cut, version, and publish. How R1 fits into existing post pipelines (or replaces them) will determine whether it becomes a daily driver or a novelty.

Quick comparison table

| Area | Typical AI video | PixVerse R1 direction |
|---|---|---|
| Iteration | Re-render per change | Update within a stream |
| Continuity | Often drifts across clips | Persistent world state |
| Workflow feel | Generate and edit | Direct and steer live |

Access and availability

PixVerse R1 is presented as a preview experience with a dedicated web surface at pixverser1.app. As of the current preview period, access appears limited and can be gated (for example, invite-style access). The service may also place users into a queue during peak load, which tracks with how compute-heavy real-time, stateful video generation would be.

If you are a creator or studio type, the smart move is simple: test whether the world stays stable when you push it. Check character consistency, object persistence, camera control, and how quickly it recovers from mid-scene changes.

The bigger signal

Whether PixVerse R1 becomes the breakout product or the first visible step in a wider shift, the direction is clear: generative video is trying to graduate from clips to systems. And for creators, that is the difference between making content about an idea and making content that can react to an idea, live, in front of an audience.

If R1 can keep continuity while staying responsive, it is not just a new tool in the stack. It is a new kind of stack.