Alibaba’s Qwen AI team announced Qwen3‑Max‑Preview (Instruct), a preview large language model with more than one trillion parameters, now available via Qwen Chat and the Alibaba Cloud API. The company says the preview model delivers stronger instruction following, broader knowledge coverage, and more reliable agentic (multi‑step, tool‑using) behavior than prior Qwen models, summing up its message as “scaling works.” Details and access are provided in Alibaba Cloud’s Model Studio documentation: Qwen3‑Max‑Preview (Instruct) docs.

What’s new in the preview

Qwen3‑Max‑Preview (Instruct) is positioned as Alibaba’s largest and most capable Qwen model to date. According to the team, it targets:

- More consistent instruction following to reduce re‑prompting and ambiguity

- Broader knowledge coverage for factual lookups across many domains

- Improved multi‑turn dialogue stability over long conversations

- Enhanced agentic performance for planning, tool calling, and multi‑step tasks

Alibaba reports that the model surpasses its previous best, Qwen3‑235B‑A22B‑2507, on internal evaluations. Early user feedback through Qwen Chat reportedly points to smoother handling of nuanced requests and more dependable multi‑stage workflows.

Scaling works. That’s the central message from the Qwen team, which says the trillion‑plus parameter scale is producing tangible quality gains that it plans to push further in the official release.

How it compares to Qwen3‑235B‑A22B‑2507

For context, Alibaba’s Qwen3 family introduced a step‑change in reasoning and efficiency earlier this year, with the 235B MoE model cited at 22B active parameters per token. The Max‑Preview sits above that line, with Alibaba highlighting gains in instruction adherence, dialog depth, and agent reliability during preview testing. Background on the Qwen3 generation and its design goals is available from Alibaba’s overview of the series: Qwen 3 overview.

| Aspect | Qwen3‑235B‑A22B‑2507 | Qwen3‑Max‑Preview (Instruct) | Notes (Alibaba’s claims) |
|---|---|---|---|
| Model scale | 235B (MoE, ~22B active) | 1T+ parameters (preview) | Emphasis on “scaling works” with quality gains |
| Instruction following | Strong | Stronger | Fewer retries and clearer adherence |
| Multi‑turn conversations | Improved vs. Qwen2.x | Improved further | More stable long‑context dialog |
| Agentic/tool use | Supported | More reliable | Better at planning and sequencing |
| Availability | Qwen Chat, Alibaba Cloud API | Qwen Chat, Alibaba Cloud API | Preview: performance subject to change |

Benchmarks and early signals

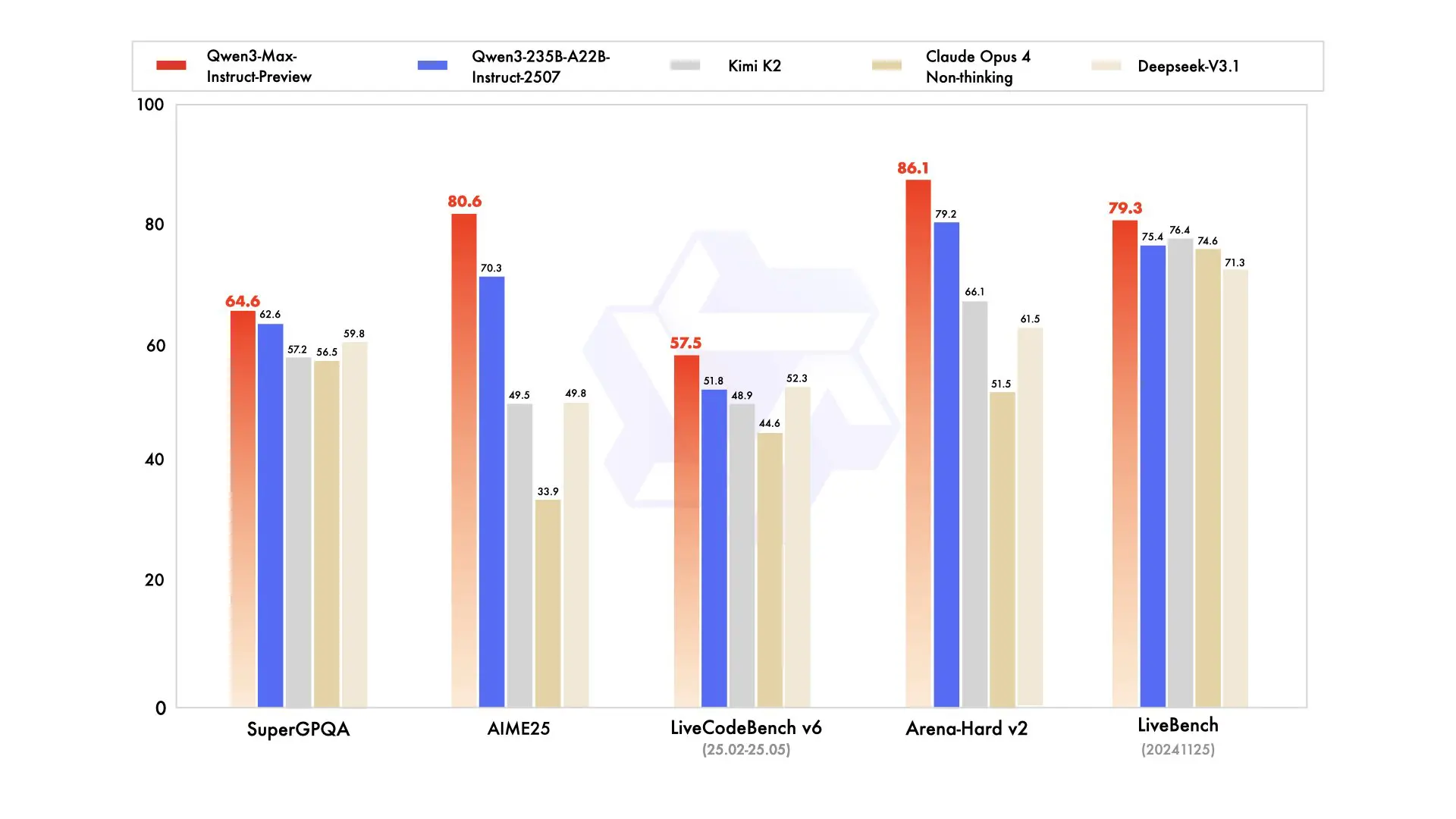

Alibaba shared internal benchmark charts indicating that Qwen3‑Max‑Preview outperforms its prior flagship on a suite of instruction‑following, reasoning, and conversation metrics. The team also cites early user feedback reporting noticeable gains on nuanced requests and longer, structured interactions.

Important: these are Alibaba’s own preview‑phase results and early reports, not independent third‑party evaluations. Industry reporting offers a broader view of how the Qwen3 generation approaches scale, reasoning modes, and efficiency; for context, see VentureBeat’s roundup of Qwen3‑Max’s preview positioning and API availability: Qwen3‑Max arrives in preview.

Why this matters for creators and brand builders

For non‑technical teams building stories, campaigns, and video or photo pipelines, two themes stand out:

- Reliability reduces friction. Better instruction following means fewer prompt iterations to get the copy, storyboard, or brand voice you asked for. That saves time – and for startup teams, time is the budget.

- Agentic workflows unlock scale. If a model can plan, call tools, and hand off steps reliably, creators can chain tasks: from research summaries to mood boards, script outlines, shot lists, and social versions – without micromanaging each step.

This preview aims directly at those friction points. For brand builders and solo founders, improved consistency in long‑form conversations can also help maintain voice across multi‑asset campaigns or multi‑week projects.

Availability and how to access it

Alibaba says Qwen3‑Max‑Preview (Instruct) is immediately accessible in two ways:

- Qwen Chat for hands‑on conversational testing

- Alibaba Cloud API for integration into apps, bots, and creative workflows

Product documentation, model IDs, and setup details are listed here: Qwen3‑Max‑Preview (Instruct) docs.
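For teams wiring the model into their own tools, here is a minimal sketch of a single chat call. It assumes the OpenAI‑compatible endpoint that Model Studio offers for Qwen models. Both the base URL (international region) and the model ID `qwen3-max-preview` are assumptions to check against the docs above before use. The sketch builds the HTTP request with Python’s standard library but does not send it.

```python
import json
import urllib.request

# ASSUMPTIONS (verify in the Model Studio docs before use):
# - BASE_URL: the OpenAI-compatible endpoint for the international region
# - MODEL_ID: the model identifier for Qwen3-Max-Preview (Instruct)
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"
MODEL_ID = "qwen3-max-preview"


def build_chat_request(api_key: str, messages: list[dict],
                       temperature: float = 0.7) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    body = {
        "model": MODEL_ID,
        "messages": messages,          # list of {"role": ..., "content": ...}
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        api_key="YOUR_API_KEY",  # placeholder, not a real key
        messages=[
            {"role": "system", "content": "You are a brand copywriter. Keep a warm, concise tone."},
            {"role": "user", "content": "Draft three taglines for a spring product launch."},
        ],
    )
    print(req.get_method(), req.full_url)
```

To send the request, pass it to `urllib.request.urlopen` and parse the JSON body. In an OpenAI‑compatible response, the reply text should sit under `choices[0].message.content`. Keeping the system message fixed across calls is one simple way to hold a brand voice steady over a long project.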

Positioning in the 2025 model landscape

Qwen3‑Max‑Preview lands amid an intense year of model updates emphasizing reasoning, long‑context handling, and agent capabilities. Alibaba’s broader Qwen3 effort previously spotlighted hybrid reasoning strategies and efficiency improvements across its lineup; see Alibaba’s high‑level Qwen3 overview for design context and series scope: Qwen 3 overview. As the preview progresses, it will be important to compare independent evaluations of Qwen3‑Max‑Preview to peers once third‑party results become available.

For creators: where the gains could show up first

- Brand/marketing content: Clearer adherence to tone, style, and instructions across long threads – helpful when orchestrating multi‑asset launches or ongoing social calendars.

- Video and photo pipelines: More reliable multi‑step planning for pre‑production tasks (research, outlines, shot lists, asset metadata) that can hand off to your production or AI‑generation stack.

- Writing and storytelling: Fewer derails over long drafts; steadier callbacks to earlier plot points, character notes, or messaging pillars across revisions.

- Agentic assistants: Improved orchestration for assistants that query data sources, call APIs, and return structured outputs – useful in research, campaign QA, and content operations.
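The agentic pattern in the last bullet works as a loop: the model requests a tool, your code runs it, and the result goes back into the conversation. A minimal sketch of that loop follows. It assumes the OpenAI‑style `tools` / `tool_calls` message format, which Model Studio’s compatible mode is assumed to accept. `lookup_brand_guidelines` and its data are hypothetical stand‑ins for a real data source.

```python
import json


def lookup_brand_guidelines(brand: str) -> dict:
    """HYPOTHETICAL local tool: return stored tone/style notes for a brand."""
    notes = {"acme": {"tone": "playful", "banned_words": ["cheap"]}}
    return notes.get(brand.lower(), {"tone": "neutral", "banned_words": []})


# Tool schema in the OpenAI-style "tools" format (ASSUMED to be accepted by the
# compatible-mode endpoint; confirm in the Model Studio docs). It would be sent
# in the request body alongside "model" and "messages".
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "lookup_brand_guidelines",
            "description": "Fetch tone and style notes for a brand.",
            "parameters": {
                "type": "object",
                "properties": {"brand": {"type": "string"}},
                "required": ["brand"],
            },
        },
    }
]

# Only functions listed here can be triggered by the model.
REGISTRY = {"lookup_brand_guidelines": lookup_brand_guidelines}


def run_tool_calls(assistant_message: dict) -> list[dict]:
    """Run each tool call in an assistant message and return 'tool' messages
    to append to the conversation before the next model turn."""
    results = []
    for call in assistant_message.get("tool_calls") or []:
        fn = REGISTRY[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        output = fn(**args)
        results.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(output),
        })
    return results
```

After appending the assistant message and these `tool` messages to the history, you would send the whole conversation back to the model for its next step. A small registry like `REGISTRY` keeps the set of functions the model can trigger explicit and easy to audit.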

For readers exploring Qwen’s creative track record, our prior coverage looked at Qwen’s growing role in accessible media generation: How Alibaba’s latest Qwen AI accelerates free AI video generation.

What’s next and what to watch

Alibaba frames this as a preview designed to gather usage data and polish the model for an “official” release. The company suggests further improvements are planned for the general release, including reinforcement and safety tuning carried forward from the preview. Two threads are worth watching:

- Independent benchmarks: Expect third‑party evaluations across reasoning, instruction following, agent tasks, and long‑context use to validate or challenge Alibaba’s internal results.

- Agent reliability: Creators and marketers will be watching whether tool‑calling and plan‑execution stability holds up under varied, real‑world workflows.

This is a preview build. Performance claims cited here reflect Alibaba’s internal evaluations and early user feedback, not independent third‑party tests.

Bottom line

With Qwen3‑Max‑Preview (Instruct), Alibaba is making the case that pushing model scale beyond the trillion‑parameter mark can deliver practical gains where creators feel them most: fewer retries, steadier long‑form dialog, and more dependable agentic handoffs. The model is live now in Qwen Chat and via the Alibaba Cloud API, with a fuller release on the way. If your work leans on complex instructions, multi‑turn briefs, or chained tasks across content and brand workflows, this preview looks worth testing – especially to gauge how “scaling works” translates into day‑to‑day creative reliability.