Zhipu AI (also branded as Z.ai) just open-sourced GLM-Image, a text-to-image model aimed squarely at the stuff creators actually ship: posters, promos, product visuals, and graphics that need real typography without turning into alphabet soup. The official docs are live, and they are not shy about the positioning: GLM-Image is pitched as a production-capable image generator with unusually strong text rendering and layout behavior for an open model. You can start from the source materials here: GLM-Image documentation.

This release matters for two reasons that are not just “yay, another model.” First: GLM-Image is open-weight and widely accessible (including a public model listing on Hugging Face), which instantly puts it on the menu for teams who want controllable, self-hosted image generation. Second: Z.ai says the model was trained end-to-end on Huawei Ascend hardware using MindSpore, a noteworthy infrastructure flex that signals faster iteration cycles and less dependency on the usual GPU supply story.

What actually shipped

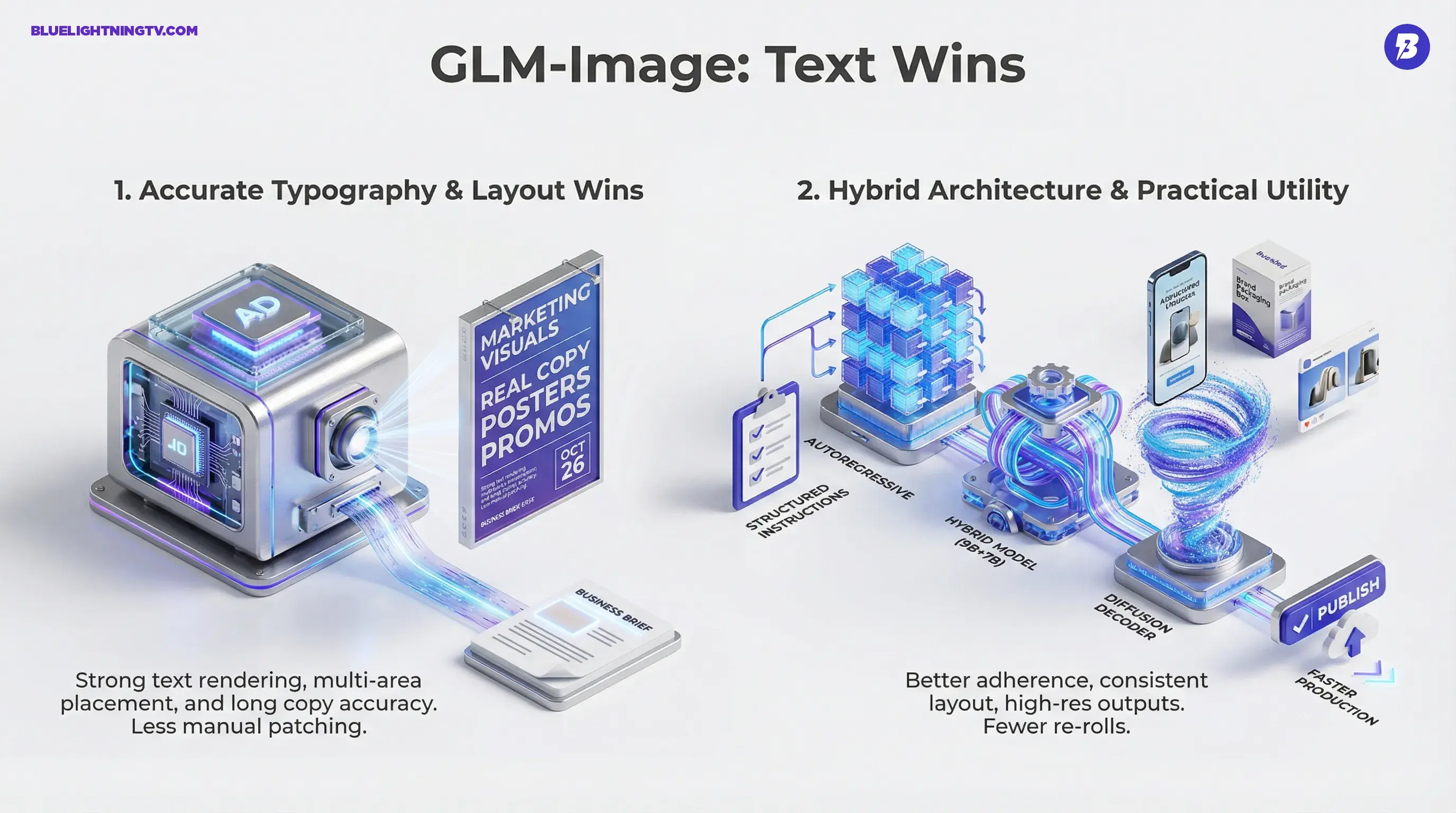

GLM-Image is an image generation model with a hybrid design: an autoregressive generator paired with a diffusion decoder. Z.ai describes it as a 16B-parameter system, and the public model listing reflects that split as 9B autoregressive + 7B diffusion.

If you want the model distribution itself (not just the marketing copy), it is also available through the official Hugging Face repo: zai-org/GLM-Image on Hugging Face.

Here is the key: this is not being positioned as an art toy. It is being positioned as a generator for business visuals, the kind that require stable placement, consistent text blocks, and less “why is the logo melting” behavior.

The signal in this launch is not novelty. It is intent: GLM-Image is trying to be the open model you can use when the deliverable has a headline and a deadline.

Why creators should care

Most text-to-image models are great at vibes and terrible at copy. That is fine for moodboards. It is not fine for a carousel ad with pricing, a thumbnail with a hook, or a poster with a date and venue.

Z.ai is leaning into exactly those needs. In their own materials, they call out strengths around text rendering and structured composition, and they publish text-focused evaluations such as CVTG-2K (multi-region text) and LongText-Bench (long, multi-line text). In Z.ai’s reporting, GLM-Image hits about 0.9116 Word Accuracy on CVTG-2K, and leads open models on LongText-Bench with roughly 0.9524 (English) and 0.9788 (Chinese).

From a creator workflow standpoint, the value is straightforward:

- Fewer re-rolls to get the layout roughly right

- Less manual patching in Photoshop or Figma to fix lettering

- More usable first drafts for internal review and client approval

And yes, you will still polish. But the goal is to get you from “blank canvas” to “approved direction” faster.

The typography problem, addressed

If you have ever tried to generate a poster in a general-purpose diffusion model, you know the pattern:

1) the composition is close

2) the words are not words

3) you end up rebuilding the whole thing with overlays anyway

GLM-Image is explicitly trying to reduce that pain. Based on Z.ai’s own reporting (and echoed by third-party coverage), the model is tuned for long text rendering and multi-area text placement, two failure modes where most models fall apart.

Even better: it is not just English-centric. Z.ai’s LongText-Bench numbers report strong behavior for Chinese text as well.

For teams doing global work, that is not a minor detail. It is the difference between “we can actually use this” and “cool demo, unusable output.”

Hybrid architecture, practical impact

“Autoregressive + diffusion” sounds like something you would ignore and scroll past (valid). But the creator translation is: better adherence + better finish.

Autoregressive components tend to be strong at:

- following structured instructions

- maintaining relationships between elements

- staying aligned to the prompt content

Diffusion decoders tend to be strong at:

- texture and realism

- style flexibility

- high-frequency detail

So the bet here is: make the model understand what you asked for, then render it cleanly.

That is also why this model is being framed as useful for marketing and design outputs, those are the domains where “close enough” is not close enough.

Huawei training matters (but not for the reason you think)

Z.ai and Huawei are emphasizing that GLM-Image was trained on Huawei Ascend using MindSpore. That is a big industry storyline, but for creators the relevance is not geopolitical trivia. It is cadence and availability.

If a lab can train and iterate without relying on the same constrained hardware supply chain as everyone else, you often get:

- faster refresh cycles

- more model variants

- more competitive pricing pressure (especially on API offerings)

On pricing: Z.ai’s pricing page lists an API price of $0.015 per image. See: Z.ai pricing.

For background coverage on the Huawei-trained angle, see: InfoWorld’s report.

Snapshot: what it’s optimized for

| Creator need | GLM-Image signal | Why it matters |

|---|---|---|

| Readable text in images | Text-focused evals emphasize long text and multi-area placement | Posters, thumbnails, carousels stop being “design rebuilds” |

| Prompt adherence | Autoregressive component helps structure and intent | Less time wrestling prompt gymnastics for basic accuracy |

| High-res outputs | Docs support flexible sizes from 512 to 2048 (multiples of 32) | Fewer upscalers and fewer “this can’t ship” moments |

Where this fits right now

GLM-Image lands in a very specific gap in the open ecosystem: a model that is not just chasing aesthetic quality, but chasing design utility.

That puts it in conversation with:

- open models that are strong on style but still fragile on text and layout

- closed models that do better on “ad-ready” outputs but do not give you control, privacy, or custom deployment

The real competitive edge for creators is not “is it prettier than Midjourney.” It is “can I produce 12 variants of a promo graphic with legible copy and consistent structure without rebuilding everything manually.”

On that axis, GLM-Image is making a credible play.

If you want related context on why typography is turning into the real bottleneck for shippable gen images, see our earlier Firefly coverage: Firefly Adds FLUX.2: Better Text, Real Workflows.

Limits to keep in mind

Balanced take: even if GLM-Image is better at typography than typical open models, type is still type. Expect edge cases to remain:

- small font sizes

- stylized fonts

- dense paragraphs

- complicated brand lockups

Also, open-weight does not automatically mean “easy.” Self-hosting and integrating into a workflow still requires:

- hardware planning (the 16B weights are heavy)

- pipeline tooling

- prompt conventions for your team

- QA steps so outputs do not drift off-brand

So yes: this can meaningfully accelerate production. No: it does not eliminate design judgment or finishing work.

The bigger signal

GLM-Image is part of a trend we are going to see more of in 2026: open models getting less artsy demo and more workflow native.

The interesting part is not just that it is open. It is that it is open and targeted at the work that pays bills: ads, product visuals, explainers, branded graphics. If the community validates the typography and layout claims at scale, this will become a reference point: open-weight image generation that is actually comfortable living next to Figma and a content calendar.

And if nothing else, GLM-Image adds pressure across the category: better text rendering and layout control stop being “premium closed-model perks” and start looking like baseline expectations.