Wan‑AI has released Wan2.2‑Animate‑14B, a unified generative model designed for fast, high‑fidelity character animation and character replacement. The model handles motion and expression replication in a single system that covers body, face, pose, timing, and scene appearance. Both model weights and inference code are available on Hugging Face for teams that need local control or private workflows (Wan2.2‑Animate‑14B on Hugging Face).

What’s new: a single model that does the hard parts together

The headline change is consolidation. Instead of chaining separate tools for pose transfer, face reenactment, and color/lighting fixes, Wan2.2‑Animate‑14B handles two tasks end to end: animation, from a character image plus a driving video, and replacement, which swaps a new subject into an existing shot. In both cases it keeps global motion and fine facial cues aligned. That unified treatment reduces the flicker, identity drift, and compositing artifacts that typically creep in when multiple tools disagree about pose or timing.

Two modes at a glance

| Mode | Inputs | What it replicates | Typical output | Where it fits |
|---|---|---|---|---|
| Animation | Single character image + driving video | Full‑body pose and timing, head motion, facial expressions | New video of the character moving like the driver | Social shorts, music promos, character tests, pre‑viz |
| Replacement | Source video + target character image | Scene choreography, lighting and color grade, performance beats | Seamless subject swap inside the original scene | Advertising comps, virtual talent, reshoots, UGC personalization |

Holistic replication: motion + micro‑expression fidelity

Across both modes, the model targets a consistent whole‑performance reproduction: the big arcs of the body match the driver’s rhythm and weight shift, while facial reenactment preserves identity, and eye gaze, mouth shape, and transient micro‑expressions track cleanly with minimal jitter. For creators, that means less time patching over uncanny moments and more time refining creative direction.

Identity holds, expressions land, and lighting belongs to the scene out of the box. Wan2.2‑Animate‑14B treats the performance as one coherent thing, not a stack of separate fixes.

Why this matters for creators and brand builders

For video‑first marketers, independent filmmakers, and social teams, a unified animation/replacement model compresses timelines. When motion realism and facial nuance hold up under close‑ups, cuts require fewer “hide the artifact” edits. For startups and solo creators, the ability to produce production‑ready composites straight from a still or an existing shot can remove the need for separate motion capture, face retargeting, and heavy roto.

- Speed to storyboard: Generate believable character motion for client approvals and pitch decks without staging multiple test shoots.

- Variant workflows: Swap talent or avatars for regional/localized cuts while maintaining scene fidelity.

- Privacy and control: With weights and inference code available, studios can run on‑prem and meet compliance needs.

Access and availability

Wan‑AI is shipping Wan2.2‑Animate‑14B with multiple access paths for teams that want to evaluate quality, move fast in the browser, or integrate privately in pipelines.

- Model weights + inference code: Available on Hugging Face with documentation for both animation and replacement modes (see link above).

- Try online: A browser demo lets creators test motion following and character swaps without setup (wan.video).

- GitHub repository: Source and reference code for integrating into local workflows (Wan2.2 on GitHub).
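For teams taking the local route, pulling the weights might look like the sketch below. It assumes the `huggingface_hub` Python package is installed and that the repository id is `Wan-AI/Wan2.2-Animate-14B`. That id and the helper names `local_model_dir` and `fetch_weights` are illustrative assumptions, so confirm the exact name on the model page before running it.

```python
from pathlib import Path

# Assumed repository id; check the Hugging Face model page for the exact name.
REPO_ID = "Wan-AI/Wan2.2-Animate-14B"


def local_model_dir(root: str, repo_id: str = REPO_ID) -> Path:
    """Return the folder under `root` where the checkpoint would be stored."""
    return Path(root) / repo_id.split("/")[-1]


def fetch_weights(root: str = "./models", repo_id: str = REPO_ID) -> Path:
    """Download the full model repository into a local folder and return its path."""
    # Imported lazily so the path helper works even without the dependency.
    from huggingface_hub import snapshot_download

    target = local_model_dir(root, repo_id)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

Calling `fetch_weights()` starts the download. A 14B‑parameter checkpoint is large, so point `root` at a disk with enough free space.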

The production angle

Instead of assembling a bespoke chain for every project, creative teams can standardize on a single engine that respects scene lighting and grading as it animates or replaces. That end‑to‑end treatment cuts down on mismatched shadows, color shifts, and temporal noise, the usual giveaways that a composite has been stitched together from multiple models.

In commercial work, that can translate to fewer rounds needed to “sell” the shot. In music videos and brand content, it opens the door to more ambitious camera moves and closer framings without worrying that the face will drift between takes.

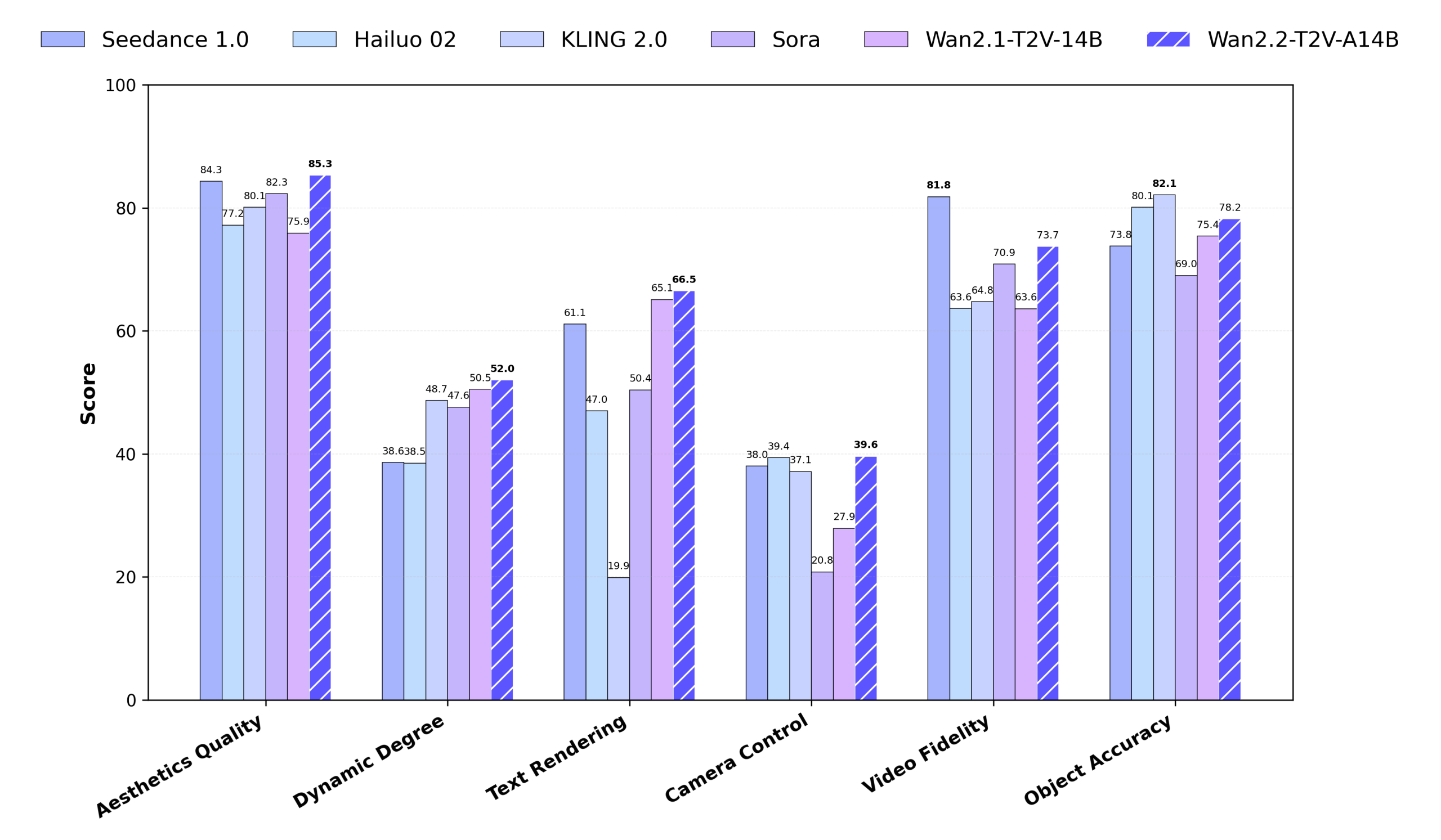

How it fits into today’s AI video landscape

Over the last year, broad video generators have raced ahead on cinematic staging, while niche tools specialize in pose transfer, lip sync, or head replacement. Wan2.2‑Animate‑14B lands in the middle: a domain‑focused model that prioritizes character fidelity and scene integration. For creators, that balance matters. Marketing teams need consistent likeness across a season of assets; storytellers need a character who can emote without breaking between frames; brand builders need subject swaps that honor lighting, not fight it.

Signals for teams evaluating adoption

- Identity stability: Strong preservation across motion‑heavy clips reduces re‑takes and patch shots.

- Close‑up resilience: Facial reenactment and eye/mouth detail retain coherence under tighter crops.

- Scene‑aware composites: Color and lighting align with the original footage, improving believability.

- Open model access: Weights + inference code enable on‑prem deployment, custom data evaluation, and integration into existing edit stacks.

Use cases lighting up first

Early traction is clear in:

- Advertising and social: Fast character variants for A/B creative, localized talent without reshoots, and virtual ambassadors that keep brand visuals consistent across campaigns.

- Pre‑viz and concepting: Rapid motion studies to communicate blocking, beats, and tone before committing to full production.

- Virtual production: On‑model replacement where lighting continuity matters, especially for inserts or pickups.

- UGC and creator tools: One‑click animation or swap experiences that benefit from identity and motion fidelity out of the box.

Constraints and expectations

As with any model in this category, quality is contingent on input quality. Clean reference images and well‑exposed driver clips yield the best motion and expression transfer. And while the rollout makes local inference possible for privacy‑sensitive work, browser demos remain the fastest way to sanity‑check fit and style before deeper integration.
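Input quality can be screened before a run. The sketch below is one rough way to flag badly exposed driver frames; it is not part of the Wan2.2 tooling. It works on plain 8‑bit luminance values (0 to 255) and leaves frame decoding to whatever video library the team already uses. The function names and all thresholds are illustrative assumptions to tune per project.

```python
from statistics import fmean


def exposure_report(luma, dark=16, bright=239):
    """Summarize exposure for one frame given 8-bit luminance values (0-255)."""
    values = list(luma)
    if not values:
        raise ValueError("frame has no pixels")
    n = len(values)
    return {
        "mean": fmean(values),                            # average brightness
        "crushed": sum(v <= dark for v in values) / n,    # share of near-black pixels
        "blown": sum(v >= bright for v in values) / n,    # share of near-white pixels
    }


def looks_well_exposed(report, max_clipped=0.05, mean_range=(60, 190)):
    """Heuristic pass/fail: mid-range mean and little clipping at either end."""
    lo, hi = mean_range
    return (
        lo <= report["mean"] <= hi
        and report["crushed"] <= max_clipped
        and report["blown"] <= max_clipped
    )
```

Running the check on a sample of frames from each driver clip, rather than on every frame, is usually enough to catch clips that are too dark or blown out before spending compute on them.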

Quick reference: what’s bundled vs. what you bring

| Included in Wan2.2‑Animate‑14B | Provided by the creator | Outcome for your pipeline |
|---|---|---|
| Unified animation + replacement model | Character image(s), driving or source video | Fewer model hand‑offs; less artifact accumulation |
| Facial reenactment tied to global motion | Framing and creative direction | Close‑up‑safe identity and expression stability |
| Scene‑aware color/lighting integration | Original footage’s look/grade | Composites that belong in the shot without extra grading |
| Weights + inference code for local runs | Compute environment and deployment | Private experiments and on‑prem workflows |

Calls to action for teams

For a zero‑setup look at motion fidelity and identity hold, the web demo is the fastest path (see wan.video). Teams that need to validate privately can pull the model and reference code from Hugging Face (see the link at the top of this post) or integrate from the GitHub repository (Wan2.2 on GitHub).

Bottom line

Wan2.2‑Animate‑14B consolidates once‑fragile pipelines into a single model that treats performance as a whole: movement, expression, and scene lighting in concert. For creators, founders, and brand teams, that means fewer trade‑offs between speed and quality, and a straighter path to videos that look and feel finished. With public weights and inference code plus quick browser demos, it is built to meet both experimentation and production needs on day one.